This poster is published under an open license. Please read the disclaimer for further details.

Keywords: Cancer, Observer performance, Mammography, Breast

Authors: K. Schilling, J. The, S. Griff, L. Oliver, R. Mahal, M. Saady, M. V. Velasquez; Boca Raton/US

DOI: 10.1594/ecr2015/C-1281

Results

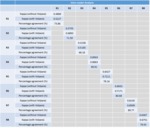

The overall visual BI-RADS distributions for each reader and the VDG distributions are shown in Table 1, without (top panel) and with (bottom panel) the aid of Volpara. Without the aid of Volpara, there was considerable variability in the proportion of women assigned to each BI-RADS category. For example, without the software aid, the percentage of women assigned as BI-RADS 1, BI-RADS 2, BI-RADS 3 and BI-RADS 4 ranged from 3.4 – 27.3%, 47.7 – 71.6%, 17.0 – 35.2%, and 0 – 6.8%, respectively. When the VDG scores from Volpara were available, the range in the proportion of women allocated to each category by the eight readers appeared to be reduced, i.e. 8.0 – 18.2%, 46.6 – 62.5%, 22.7 – 34.1%, and 3.4 – 10.2%, respectively. In comparison, Volpara assigned 18.2, 40.9, 28.4 and 12.5% of women to BI-RADS categories 1 to 4, respectively.
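The comparison of reader ranges can be made explicit by computing the width of each range in percentage points. The sketch below only restates the figures quoted in the text; the variable and function names are illustrative and are not part of the study's analysis.

```python
# Reader ranges (min %, max %) per BI-RADS category, copied from the text.
unaided = {1: (3.4, 27.3), 2: (47.7, 71.6), 3: (17.0, 35.2), 4: (0.0, 6.8)}
aided = {1: (8.0, 18.2), 2: (46.6, 62.5), 3: (22.7, 34.1), 4: (3.4, 10.2)}


def spread(ranges):
    """Width of each range in percentage points, rounded to one decimal."""
    return {cat: round(hi - lo, 1) for cat, (lo, hi) in ranges.items()}


unaided_spread = spread(unaided)
aided_spread = spread(aided)

for cat in unaided:
    print(f"BI-RADS {cat}: {unaided_spread[cat]} -> {aided_spread[cat]} points")
```

With these figures, the width narrows for BI-RADS 1 to 3 (23.9 to 10.2, 23.9 to 15.9 and 18.2 to 11.4 points) and is unchanged at 6.8 points for BI-RADS 4.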

The percentage agreement between each pair of readers, using a four-category density scale, is shown in Table 2, without (top panel) and with (bottom panel) the aid of Volpara. On average, the agreement between pairs of readers improved by approximately two percentage points when the software aid was used.
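As an illustration of how a pairwise agreement table of this kind can be computed, the following minimal sketch counts the cases in which two readers assign the same category. The reader labels and scores are hypothetical toy data, not values from the study, and the function names are assumptions made for this example.

```python
from itertools import combinations


def percent_agreement(a, b):
    """Percentage of cases in which two readers assign the same category."""
    if len(a) != len(b) or not a:
        raise ValueError("score lists must be non-empty and of equal length")
    matches = sum(x == y for x, y in zip(a, b))
    return 100.0 * matches / len(a)


def pairwise_agreement(scores):
    """Percent agreement for every unordered pair of readers."""
    return {
        (r1, r2): percent_agreement(scores[r1], scores[r2])
        for r1, r2 in combinations(sorted(scores), 2)
    }


# Hypothetical four-category density scores for three readers on five cases.
toy_scores = {
    "A": [1, 2, 2, 3, 4],
    "B": [1, 2, 3, 3, 4],
    "C": [2, 2, 3, 3, 3],
}

table = pairwise_agreement(toy_scores)
mean_agreement = sum(table.values()) / len(table)
```

Running the same calculation on the unaided and the aided scores and comparing the two means gives the average change in percentage points.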

Table 3 shows the level of inter-reader agreement, expressed as kappa statistics, without (top panels) and with (bottom panels) the aid of Volpara. The use of quantitative VBD significantly improved inter-reader agreement in radiologists’ assessment of breast density (p = 0.0374), with a mean kappa of 0.5664 without VDG and 0.6266 with VDG.
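The poster does not state which kappa variant was used. As a sketch under the assumption of unweighted Cohen's kappa for a pair of readers, kappa compares the observed agreement p_o with the agreement p_e expected by chance from each reader's category frequencies: kappa = (p_o − p_e) / (1 − p_e). The scores below are hypothetical toy data.

```python
from collections import Counter


def cohen_kappa(a, b):
    """Unweighted Cohen's kappa for two readers' categorical scores."""
    n = len(a)
    if n == 0 or n != len(b):
        raise ValueError("score lists must be non-empty and of equal length")
    # Observed agreement: fraction of cases with identical categories.
    p_o = sum(x == y for x, y in zip(a, b)) / n
    # Chance agreement from the two readers' marginal category counts.
    count_a, count_b = Counter(a), Counter(b)
    p_e = sum(count_a[k] * count_b[k] for k in count_a) / (n * n)
    if p_e == 1.0:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (p_o - p_e) / (1.0 - p_e)


# Hypothetical scores for two readers on six cases.
reader_x = [1, 2, 2, 3, 4, 2]
reader_y = [1, 2, 3, 3, 4, 2]
kappa_xy = cohen_kappa(reader_x, reader_y)
```

A mean kappa such as the one reported could then be obtained by averaging this statistic over all reader pairs. For an ordinal scale like BI-RADS density, a weighted kappa is a common alternative, and it would give different values.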

Although the above results demonstrate that use of a density software aid can improve the agreement between readers, the effectiveness of the aid depends largely on the willingness of the reader to amend their scores based on an objective measure of density. Table 4 outlines the intra-reader agreement for each individual reader without and with the software aid. Although there is always some inherent intra-reader variability, these results suggest that some readers are fairly confident in their own readings, while others are more amenable to changing their density readings. For example, having access to the objective scores led reader 4 to change their final density assessment in fewer than 10% of cases, whereas reader 3 changed their density assessment in over 30% of cases.
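The share of cases in which a reader revises a score can be tallied as in the following sketch, which also records the direction of each change. The scores are hypothetical toy data and the function name is an assumption made for this example; the study's own intra-reader analysis may differ.

```python
from collections import Counter


def score_changes(unaided, aided):
    """Return (percent of cases changed, counts of (before, after) changes)."""
    if len(unaided) != len(aided) or not unaided:
        raise ValueError("score lists must be non-empty and of equal length")
    # Keep only the cases where the aided score differs from the unaided one.
    transitions = Counter((u, v) for u, v in zip(unaided, aided) if u != v)
    changed = sum(transitions.values())
    return 100.0 * changed / len(unaided), transitions


# Hypothetical scores for one reader on ten cases, before and after the aid.
before = [1, 2, 2, 3, 4, 2, 3, 2, 1, 3]
after = [1, 2, 3, 3, 4, 2, 3, 2, 2, 3]

rate, transitions = score_changes(before, after)
```

In this toy example the reader changes 2 of 10 scores, both upward by one category.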