This retrospective study was approved by the ethics committee of our institution, and informed consent was not required.

A factorial design with repeated measures was used in this study.

Reliability refers to the reproducibility of, or agreement between, measurements of the variables for each case, either when rated by different observers on the same display system (i.e., intradevice reliability) or when rated by each observer using the different display systems, which are the treatments of the factorial design (i.e., interdevice reliability). Agreement can be assessed using the kappa statistic.
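As a minimal sketch of the kappa statistic for two sets of categorical ratings of the same cases (for example, one radiologist's lesion classifications on the two display systems), Cohen's kappa compares the observed agreement p_o with the agreement p_e expected by chance from each rating set's marginal frequencies, κ = (p_o − p_e)/(1 − p_e). The function name and the example labels are hypothetical; the text does not state which kappa variant was used, and this is not the Stata implementation.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(ratings_a: Sequence[Hashable], ratings_b: Sequence[Hashable]) -> float:
    """Cohen's kappa for two categorical ratings of the same cases."""
    if len(ratings_a) != len(ratings_b) or len(ratings_a) == 0:
        raise ValueError("need two non-empty rating sequences of equal length")
    n = len(ratings_a)
    # Observed agreement: share of cases that received the same category.
    p_o = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    # Chance agreement from the marginal category frequencies of each rating set.
    freq_a, freq_b = Counter(ratings_a), Counter(ratings_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if p_e == 1.0:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical lesion classifications of six cases by one reader on two devices.
device_a = ["ischemic", "hemorrhagic", "none", "ischemic", "chronic", "none"]
device_b = ["ischemic", "hemorrhagic", "none", "chronic", "chronic", "none"]
print(round(cohens_kappa(device_a, device_b), 3))  # 0.778
```

Here five of the six hypothetical cases agree (p_o = 5/6) and chance agreement is p_e = 9/36, so κ = 7/9.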

1.1. Sample and standard:

Patients with symptoms of acute stroke who attended the emergency services for acute stroke evaluation between 2013 and 2016 at the Fundación Santa Fe de Bogotá University Hospital (FSFBUH) were included. Our hospital is a board-certified referral stroke center. Patient age ranged from 30 to 97 years, with a mean of 71.6 years (standard deviation, 15.1); 65 patients were male and 72 female. The patients were randomly selected without repetition. Cases with image artifacts were excluded.

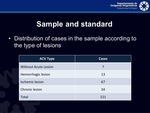

The distribution of cases in the sample according to the type of lesion is shown in Table 1.

The cases consisted of brain CT examinations stored in our hospital PACS. They were acquired using a General Electric LightSpeed 64-slice CT scanner (General Electric Healthcare, GE Medical Systems, Milwaukee, WI, USA) with the following parameters: 100 kV, 10 mAs, axial: 5 mm, sagittal: 3 mm, FOV: 26 cm, pixel spacing: 0.469 mm, matrix: 512 x 512, pitch: 0.984:1, and signed 15-bit pixel depth.

2. Observers and reading observed variables:

Five neuroradiologists (three with more than ten years of experience) and one neuroradiology fellow were selected as observers. They were asked to classify the type of stroke lesion (i.e., hemorrhagic lesion, ischemic lesion, chronic lesion, or no acute lesion). Depending on the selected lesion type, the radiologist then rated additional variables, such as contraindications to tPA administration for acute ischemic lesions, confidence in the presence of a hyperdense intracranial artery (HIA), and the ASPECTS score (range, 1–10).

3. Display monitors and viewer software:

According to the American College of Radiology (ACR) guidelines for teleradiology, small-matrix images such as CT must be displayed on a monitor with a matrix of at least 512 x 512 at a minimum pixel depth of 8 bits, so that they can be processed or manipulated with no loss of matrix size or bit depth at display. In addition, the Digital Imaging and Communications in Medicine (DICOM) standard recommends the use of monitors calibrated to a maximum luminance of 400–500 cd/m². Therefore, we used two displays that meet these specifications.
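The minimum matrix and bit-depth figures quoted above, together with the luminance range, can be expressed as a simple screening check. This is a sketch under our own assumptions: `DisplaySpec` and `meets_quoted_thresholds` are hypothetical names, and a display is treated as able to reach the 400–500 cd/m² calibration target when its maximum luminance is at least 400 cd/m². The actual guidelines involve more than these three numbers. The example applies the check to the BARCO E-2620 specifications reported in this section.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DisplaySpec:
    name: str
    width_px: int
    height_px: int
    bit_depth: int
    max_luminance_cd_m2: float


def meets_quoted_thresholds(d: DisplaySpec, min_matrix: int = 512,
                            min_bit_depth: int = 8,
                            min_luminance_cd_m2: float = 400.0) -> bool:
    """Screen a display against the minimum figures quoted in the text.

    Assumption: a display can be calibrated into the 400-500 cd/m2 range
    if its maximum luminance reaches the lower bound of that range.
    """
    # The image matrix must fit without downsampling in either orientation.
    fits_matrix = min(d.width_px, d.height_px) >= min_matrix
    return (fits_matrix
            and d.bit_depth >= min_bit_depth
            and d.max_luminance_cd_m2 >= min_luminance_cd_m2)


barco = DisplaySpec("BARCO E-2620", 1600, 1200, 8, 700.0)
print(meets_quoted_thresholds(barco))  # True
```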

The routine system for CT reading in our hospital is a PACS workstation with the DICOM-compliant viewer software Agfa IMPAX 6.5 (AGFA HealthCare, Mortsel, Belgium). Images are displayed on a BARCO E-2620 monitor (BARCO N.V., Kortrijk, Belgium), a 2-megapixel (MPx), DICOM-compliant LCD medical grayscale display with a dot pitch of 0.249 mm, a spatial resolution of 1600 x 1200 pixels, a maximum luminance of 700 cd/m², and 8-bit grayscale. This system, hereafter referred to as MEDICAL-IMPAX, was used as the reference reading system in this study.

For the mobile option, an Apple iPad Pro 9.7 MLMN2CL/A (Apple Inc., Cupertino, CA, USA) was selected, with a 9.7-inch “Retina” display, a dot pitch of 0.096 mm (264 dpi), a spatial resolution of 1536 x 2048 pixels, and a maximum luminance of 500 cd/m². The viewer software used on this tablet was Agfa XERO Viewer 3.0 (Agfa HealthCare, Mortsel, Belgium); this system is hereafter referred to as TABLET-XERO.
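The dot-pitch, size, and megapixel figures quoted for the two displays are related by simple arithmetic: dot pitch in millimetres is 25.4 divided by the pixels per inch, and the diagonal follows from the pixel grid. The sketch below uses hypothetical helper names and assumes square pixels. It reproduces the iPad's 0.096 mm pitch from 264 dpi, recovers its 9.7-inch diagonal from the 1536 x 2048 grid, and shows that 1600 x 1200 pixels is about 1.92 MPx, the nominal 2 MPx of the BARCO display.

```python
import math


def dot_pitch_mm(pixels_per_inch: float) -> float:
    """Center-to-center pixel distance in millimetres (1 inch = 25.4 mm)."""
    return 25.4 / pixels_per_inch


def diagonal_inches(width_px: int, height_px: int, pitch_mm: float) -> float:
    """Active-area diagonal implied by a pixel grid with square pixels."""
    diagonal_px = math.hypot(width_px, height_px)
    return diagonal_px * pitch_mm / 25.4


def megapixels(width_px: int, height_px: int) -> float:
    """Total pixel count in millions."""
    return width_px * height_px / 1e6


print(f"{dot_pitch_mm(264):.3f}")                               # 0.096
print(f"{diagonal_inches(1536, 2048, dot_pitch_mm(264)):.1f}")  # 9.7
print(megapixels(1600, 1200))                                   # 1.92
```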

4. Data Analysis:

Patients with an ASPECTS score lower than seven are not eligible to receive tPA treatment. Therefore, we dichotomized the ASPECTS score into two categories: 0 if the score ranged from 1 to 6 (an indicator against administering tPA) and 1 if the score ranged from 7 to 10 (an indicator of eligibility for tPA).
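The dichotomization rule can be written as a small function. This is a sketch with a hypothetical name; it follows the 1–10 range recorded in this study and rejects values outside it.

```python
def dichotomize_aspects(score: int) -> int:
    """Map an ASPECTS score (1-10, as recorded in this study) to 0 or 1.

    0: score 1-6, coded as an indicator against tPA administration.
    1: score 7-10, coded as an indicator of tPA eligibility.
    """
    if not 1 <= score <= 10:
        raise ValueError(f"ASPECTS score outside the recorded range 1-10: {score}")
    return 1 if score >= 7 else 0


print([dichotomize_aspects(s) for s in range(1, 11)])  # [0, 0, 0, 0, 0, 0, 1, 1, 1, 1]
```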

We evaluated agreement on two variables: 1) the type of stroke lesion and 2) the dichotomized ASPECTS score. For each of these variables, we evaluated intradevice agreement (i.e., agreement among radiologists interpreting with a single reading system) and interdevice agreement (i.e., agreement, across all radiologists, between interpretations of the same patient images on the two different systems, MEDICAL-IMPAX and TABLET-XERO). As a result, we evaluated the following four measures: 1) intradevice agreement on lesion classification, 2) interdevice agreement on lesion classification, 3) intradevice agreement on the dichotomized ASPECTS classification, and 4) interdevice agreement on the dichotomized ASPECTS classification.
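For intradevice agreement among the six observers, one common multi-rater statistic is Fleiss' kappa, which averages the proportion of agreeing rater pairs per case and corrects it with the pooled category frequencies. The text does not specify which kappa variant was computed, so this is an assumed, illustrative choice rather than the study's procedure; `fleiss_kappa` and the example ratings from three hypothetical raters are invented for illustration. For the interdevice measures, a two-rater statistic computed over each radiologist's paired readings on the two systems would play the corresponding role.

```python
from collections import Counter
from typing import Hashable, List, Sequence


def fleiss_kappa(ratings: Sequence[Sequence[Hashable]]) -> float:
    """Fleiss' kappa; ratings[i] holds every rater's label for case i."""
    n_cases = len(ratings)
    n_raters = len(ratings[0]) if n_cases else 0
    if n_cases == 0 or n_raters < 2 or any(len(r) != n_raters for r in ratings):
        raise ValueError("need >= 1 case and the same number (>= 2) of raters per case")
    category_totals: Counter = Counter()
    per_case_agreement: List[float] = []
    for case in ratings:
        counts = Counter(case)
        category_totals.update(counts)
        # Proportion of rater pairs that agree on this case.
        agreeing_pairs = sum(c * (c - 1) for c in counts.values())
        per_case_agreement.append(agreeing_pairs / (n_raters * (n_raters - 1)))
    p_bar = sum(per_case_agreement) / n_cases
    total = n_cases * n_raters
    # Chance agreement from the pooled category proportions.
    p_e = sum((t / total) ** 2 for t in category_totals.values())
    if p_e == 1.0:
        raise ValueError("kappa is undefined when all ratings fall in one category")
    return (p_bar - p_e) / (1 - p_e)


# Hypothetical dichotomized ASPECTS labels: four cases, three raters each.
example = [[1, 1, 1], [0, 0, 0], [1, 1, 0], [0, 1, 0]]
print(round(fleiss_kappa(example), 3))  # 0.333
```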

These four measures were evaluated with the kappa coefficient as the measure of agreement. Kappa values were ranked as defined by Landis and Koch: “Perfect”, κ = 1; “Almost Perfect”, 0.8 ≤ κ < 1; “Substantial”, 0.6 ≤ κ < 0.8; “Moderate”, 0.4 ≤ κ < 0.6; “Fair”, 0.2 ≤ κ < 0.4; “Slight”, 0 ≤ κ < 0.2; and “Poor”, κ < 0.
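The ranking scale can be applied mechanically. The sketch below uses a hypothetical function name and treats each interval as closed at its lower bound and open at its upper bound, so that 0.8 is “Almost Perfect” and 0 is “Slight”.

```python
def agreement_label(kappa: float) -> str:
    """Label a kappa value using the categories listed in the text."""
    if not -1.0 <= kappa <= 1.0:  # also rejects NaN
        raise ValueError(f"kappa must lie in [-1, 1], got {kappa}")
    if kappa == 1.0:
        return "Perfect"
    if kappa < 0:
        return "Poor"
    # Lower bounds inclusive, upper bounds exclusive.
    for lower, label in ((0.8, "Almost Perfect"), (0.6, "Substantial"),
                         (0.4, "Moderate"), (0.2, "Fair")):
        if kappa >= lower:
            return label
    return "Slight"  # 0 <= kappa < 0.2


for k in (1.0, 0.85, 0.8, 0.55, 0.1, -0.05):
    print(k, agreement_label(k))
```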

For these calculations, Stata 13.0 (StataCorp, College Station, TX, USA) was used.

In addition, the reading software recorded the reading time of each interpretation, which was used to perform an equivalence analysis with IBM SPSS Statistics 19 (SPSS Inc., USA).
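The text does not describe how the equivalence analysis of reading times was set up. One standard approach for paired measurements is the two one-sided tests (TOST) procedure: equivalence is declared when the mean difference is shown to be both greater than −Δ and less than +Δ for a prespecified margin Δ. The sketch below is an assumption on our part, not the SPSS procedure used in the study. It uses a large-sample normal approximation (a t distribution would be more appropriate for small samples), and `paired_tost`, the margin, and the reading times are hypothetical.

```python
from statistics import NormalDist, mean, stdev
from typing import Sequence, Tuple


def paired_tost(times_a: Sequence[float], times_b: Sequence[float],
                margin: float, alpha: float = 0.05) -> Tuple[float, bool]:
    """Two one-sided tests (TOST) for equivalence of paired reading times.

    Large-sample normal approximation. Returns the larger one-sided p-value
    and whether equivalence within +/- margin is declared at level alpha.
    """
    if len(times_a) != len(times_b) or len(times_a) < 2:
        raise ValueError("need at least two paired observations")
    if margin <= 0:
        raise ValueError("margin must be positive")
    diffs = [a - b for a, b in zip(times_a, times_b)]
    d_bar = mean(diffs)
    se = stdev(diffs) / len(diffs) ** 0.5
    if se == 0:
        raise ValueError("differences have zero variance")
    z = NormalDist()
    # H0: true difference <= -margin; small p when d_bar lies well above -margin.
    p_lower = 1 - z.cdf((d_bar + margin) / se)
    # H0: true difference >= +margin; small p when d_bar lies well below +margin.
    p_upper = z.cdf((d_bar - margin) / se)
    p = max(p_lower, p_upper)
    return p, p < alpha


# Hypothetical per-case reading times in seconds for the same cases.
times_tablet = [102, 108, 97, 104, 118, 99, 101, 110]
times_workstation = [100, 110, 95, 105, 120, 98, 102, 108]
p_value, equivalent = paired_tost(times_tablet, times_workstation, margin=30.0)
print(equivalent)  # True
```

With a much tighter hypothetical margin (for example, 0.5 s) the same data would not establish equivalence, which illustrates that the conclusion depends on the chosen margin.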

5. Procedure:

Each radiologist read all cases using both the MEDICAL-IMPAX and the TABLET-XERO systems. At each reading, the radiologist determined the variables described in the section “Observers and reading observed variables”.

Both reading software packages provide image manipulation tools to adjust window/level, zoom, and multiplanar reformation. These tools were available for all images and could be used at the observer’s discretion to optimize image display.

For each reading session, the radiologist verified the contrast and luminance settings of the display with the RP-133 test pattern under controlled illumination (ambient light of approximately 20 lux, according to the American Association of Physicists in Medicine (AAPM) TG18 recommendations).

The radiologists were blinded to patient and examination identification, to the original interpretation, and to the type of lesion. To ensure blinding, a junior radiology resident was in charge of loading the images into the PACS workstation or the tablet using the IMPAX or XERO viewer software.

Data were collected using a web-based form, and interpretations were stored in a database. This software presents the patients to be interpreted in random order and guides the radiologists through completing the report, ensuring the integrity and completeness of the data.
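The random presentation order can be sketched as an independent shuffle of the case list for each radiologist and reading system, drawn from a seeded generator so that the schedule can be reproduced. The function name, the seed, and the case and reader identifiers are hypothetical; the text does not describe how the web-based form generated its order, and the spacing between repeated patients is not enforced here.

```python
import random
from typing import Dict, List, Sequence, Tuple


def presentation_orders(case_ids: Sequence[str], radiologists: Sequence[str],
                        systems: Sequence[str], seed: int = 0
                        ) -> Dict[Tuple[str, str], List[str]]:
    """Independent random reading order for every (radiologist, system) pair."""
    rng = random.Random(seed)  # seeded so the schedule can be reproduced and audited
    orders: Dict[Tuple[str, str], List[str]] = {}
    for reader in radiologists:
        for system in systems:
            # sample() without replacement over the full list is a permutation.
            orders[(reader, system)] = rng.sample(list(case_ids), k=len(case_ids))
    return orders


cases = ["c01", "c02", "c03", "c04"]
orders = presentation_orders(cases, ["R1", "R2"], ["MEDICAL-IMPAX", "TABLET-XERO"], seed=42)
print(len(orders))                               # 4
print(sorted(orders[("R1", "TABLET-XERO")]))     # ['c01', 'c02', 'c03', 'c04']
```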

According to Mullins et al., the availability of a clinical history indicating that early stroke is suspected significantly improves the sensitivity for detecting strokes on unenhanced brain CT; whenever possible, relevant clinical history should be made available to physicians interpreting emergency CT scans of the head. Therefore, the clinical history used in the standard protocol for interpreting brain CT with suspected acute stroke was available to the radiologists, e.g., sex, age, principal symptoms, neurological assessment, and relevant conditions (e.g., diabetes, hypertension, headache, Parkinson’s disease, Alzheimer’s disease, sleep apnea/hypopnea syndrome, or cardiac arrhythmia). This was done to achieve a more realistic interpretation; hence, the only difference from real practice was the display system. This information was presented in the web-based collection form before each interpretation began.

Before any radiologist read the same patient a second time, 121 different patients had been read and five months had elapsed. The readings were performed over ten months in two- or four-hour sessions by each radiologist, with no time limit for each reading.
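The separation between the two readings of the same patient can be audited from each radiologist's chronological reading log. In the sketch below, which uses a hypothetical function name and log format, a repeat reading is flagged when fewer than `min_between` distinct other patients were read in between or fewer than `min_days` days elapsed. Expressing five months as a day count (for example, 150 days) is our approximation.

```python
from datetime import date
from typing import Dict, List, Sequence, Tuple


def washout_violations(log: Sequence[Tuple[date, str]], min_between: int,
                       min_days: int) -> List[str]:
    """Case IDs whose repeat reading comes too soon after the first reading.

    `log` is one radiologist's readings in chronological order, as
    (reading_date, case_id) pairs.
    """
    first_seen: Dict[str, Tuple[int, date]] = {}
    violations: List[str] = []
    for position, (day, case_id) in enumerate(log):
        if case_id not in first_seen:
            first_seen[case_id] = (position, day)
            continue
        prev_position, prev_day = first_seen[case_id]
        # Distinct other patients read between the two readings of this case.
        between = {cid for _, cid in log[prev_position + 1:position]} - {case_id}
        if len(between) < min_between or (day - prev_day).days < min_days:
            violations.append(case_id)
    return violations


reading_log = [
    (date(2016, 1, 4), "A"),
    (date(2016, 1, 4), "B"),
    (date(2016, 1, 5), "C"),
    (date(2016, 7, 1), "A"),
]
print(washout_violations(reading_log, min_between=2, min_days=150))  # []
```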

The initial display used the default window setting (WW = 174, WL = 55), but radiologists were free to select another window, such as a cerebral or a stroke window (WW = 80, WL = 40 and WW = 40, WL = 40, respectively).
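The window settings determine how CT numbers are mapped to displayed gray levels. A simplified linear version is sketched below with a hypothetical function name: values at or below WL − WW/2 are shown black, values at or above WL + WW/2 white, and values in between are scaled linearly onto the 0–255 range of an 8-bit display. The DICOM VOI LUT definition uses slightly different offsets (WL − 0.5 and WW − 1) that this sketch ignores.

```python
def apply_window(hu: float, width: float, level: float, out_max: int = 255) -> int:
    """Map a CT number (HU) to a display gray level with a linear window."""
    if width <= 0:
        raise ValueError("window width must be positive")
    lower = level - width / 2
    upper = level + width / 2
    if hu <= lower:
        return 0            # below the window: black
    if hu >= upper:
        return out_max      # above the window: white
    return round((hu - lower) / width * out_max)


# Cerebral window (WW = 80, WL = 40) and stroke window (WW = 40, WL = 40).
print(apply_window(20, width=80, level=40))  # 64
print(apply_window(30, width=40, level=40))  # 64
```

The narrower stroke window spreads a 40 HU range over the full gray scale, so small attenuation differences, such as early ischemic hypodensity, produce larger visible contrast steps than with the cerebral window.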