Purpose

The Japan diagnostic reference levels (DRLs) published in 2020 are based on the results of a fact-finding survey [1]. However, the DRL values are based mainly on surface absorbed doses and do not account for repeat exposures, which are not recorded. This study focuses on the lateral radiograph of the knee joint, which has a simple evaluation criterion. Even so, perfect positioning of the joint is not easy, and multiple exposures may be needed before the correct position is obtained....

Methods and materials

1. Definition of terms

Images that do not require re-exposure are termed “Pass”, and images that require re-exposure are termed “NG”.

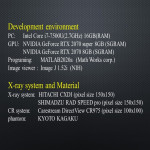

2. Parameter settings

For each of four models (AlexNet, VGG16, ResNet50, and ResNet101), we changed the batch size and input size parameters, executed the program, and verified the accuracy.

In addition, we modified only the size of each layer, without changing the number of layers, so that each network was used with minimal modifications to the original model. The settings described above were verified and the...
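The parameter sweep described in this section can be pictured as a grid over model, batch size, and input size. The following is a minimal sketch only: the four model names come from the text, whereas the batch size and input size values and the helper `build_grid` are hypothetical and chosen purely for illustration, not the settings actually used in the study.

```python
from itertools import product

# Model names are taken from the text above.
MODELS = ["AlexNet", "VGG16", "ResNet50", "ResNet101"]

# ASSUMPTION: illustrative values only; the study's actual grid is not listed here.
BATCH_SIZES = [6, 16, 32]
INPUT_SIZES = [224, 256]


def build_grid(models, batch_sizes, input_sizes):
    """Return one configuration dict per (model, batch size, input size) combination."""
    return [
        {"model": m, "batch_size": b, "input_size": (s, s)}
        for m, b, s in product(models, batch_sizes, input_sizes)
    ]


grid = build_grid(MODELS, BATCH_SIZES, INPUT_SIZES)

# Each entry would then be passed to a training and evaluation routine (not shown).
for config in grid[:3]:
    print(config)
```

Keeping the grid as plain dictionaries makes it straightforward to log each configuration next to the accuracy it produced.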

Results

1. Two-class classification

1.1 Results of the batch size study for Image Database No.1

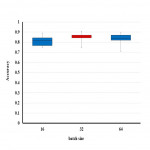

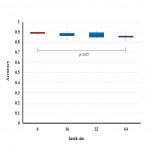

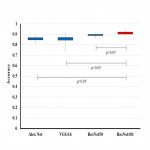

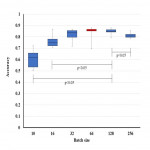

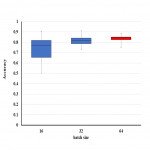

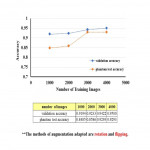

The batch size study was performed ten times for each trial, and the results for image database No.1 are shown in Figs 7-11. The highest accuracy was obtained for ResNet101 with a batch size of 16.

[Fig 7]

[Fig 8]

[Fig 9]

[Fig 10]

[Fig 11]
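One way to compare settings across repeated runs, such as the ten runs mentioned above, is to take the mean and standard deviation of accuracy per setting and pick the setting with the highest mean. The sketch below assumes this summary method; the function names `summarize_runs` and `best_setting` are hypothetical, and the numbers in the usage example are dummy values, not results from this study.

```python
from statistics import mean, stdev


def summarize_runs(runs):
    """Map each (model, batch_size) key to (mean accuracy, standard deviation).

    `runs` maps a (model, batch_size) tuple to the list of accuracies
    obtained from repeated runs of that setting.
    """
    summary = {}
    for key, accuracies in runs.items():
        spread = stdev(accuracies) if len(accuracies) > 1 else 0.0
        summary[key] = (mean(accuracies), spread)
    return summary


def best_setting(summary):
    """Return the key whose mean accuracy is highest."""
    return max(summary, key=lambda key: summary[key][0])


# DUMMY values for illustration only; these are not measurements from the study.
example_runs = {
    ("ModelA", 8): [0.5, 0.7],
    ("ModelB", 16): [0.8, 0.9],
}
example_summary = summarize_runs(example_runs)
print(best_setting(example_summary))
```

Reporting the spread alongside the mean helps show whether a difference between two batch sizes is larger than the run-to-run variation.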

1.2 Results of the batch size study for Image Database No.2

The batch size study was performed ten times for each trial, and the results...

Conclusion

In this study, the best results were obtained for image classification using ResNet101 with a batch size of 6 and an input size of 256 × 256 pixels. Within the range considered in this study, this result was not affected by the resolution of the images used.

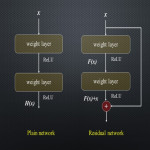

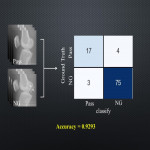

Compared with the other networks, the residual networks tended to achieve higher accuracy with a smaller batch size, because residual networks optimize a residual mapping rather than the original, unreferenced mapping. We obtained an accuracy of 0.9293...
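The residual mapping mentioned above can be illustrated with a toy block. In a residual network, the stacked layers learn F(x) and the block outputs F(x) + x through an identity shortcut [2]. The sketch below is a simplified assumption for illustration: it uses small fully connected layers in plain Python, whereas the actual ResNet blocks use convolutions and batch normalization, and the names `residual_block`, `matvec`, and `relu` are hypothetical.

```python
def relu(v):
    """Element-wise rectified linear unit."""
    return [max(0.0, a) for a in v]


def matvec(W, v):
    """Multiply matrix W (list of rows) by vector v."""
    return [sum(w * a for w, a in zip(row, v)) for row in W]


def residual_block(x, W1, W2):
    """Compute relu(F(x) + x), where F(x) = W2 * relu(W1 * x).

    The '+ x' term is the identity shortcut: the layers only have to
    learn the residual F(x) rather than the full mapping.
    """
    f = matvec(W2, relu(matvec(W1, x)))
    return relu([fi + xi for fi, xi in zip(f, x)])


zeros = [[0.0, 0.0], [0.0, 0.0]]
x = [1.0, 2.0]

# With all weights at zero the residual F(x) vanishes, so the block
# passes a non-negative input through unchanged.
print(residual_block(x, zeros, zeros))
```

The zero-weight case shows why an identity mapping is easy for such a block to represent: the layers only need to drive the residual toward zero.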

Personal information and conflict of interest

A. Matsushima:

Author: Nothing to disclose for this study.

C. Tai-Been:

Author: Nothing to disclose for this study.

T. Okamoto:

Author: Nothing to disclose for this study.

S-Y. Hsu:

Author: Nothing to disclose for this study.

J. Ryu-I:

Author: Nothing to disclose for this study.

N. Itayama:

Author: Nothing to disclose for this study.

T. Ishibashi:

Author: Nothing to disclose for this study.

K. Fukuda:

Author: Nothing to disclose for this study.

References

[1] National Diagnostic Reference Levels in Japan (2020). J-RIME, 2020.

[2] He, K., et al. "Deep Residual Learning for Image Recognition." In IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2016.

[3] Selvaraju, R. R., et al. "Grad-CAM: Visual Explanations from Deep Networks via Gradient-Based Localization." In IEEE International Conference on Computer Vision (ICCV), 2017, pp. 618–626.