Learning objectives

To raise awareness of the importance of usable AI design, to provide examples of model interpretability methods, and to summarise clinician reactions to methods of communicating AI model interpretability in a radiological tool.

Background

In the past decade, the number of AI-enabled tools in radiology, especially deep learning solutions, has grown rapidly, with the promise of revolutionising healthcare[1]. However, these data-driven models are often treated as numerical exercises and black boxes, offering little insight into the reasons for their behaviour.

Trust in novel technologies is often limited by a lack of understanding of the decision-making processes behind the technology. In medical AI, this problem is twofold: firstly, AI technologies are not widely taught in any medical curriculum...

Findings and procedure details

Design Cycle

“It's just aggravating to have to move and shuffle all these windows… shuffle between the list and your [Brand Name] dictation software… [or] Google Chrome or Internet Explorer, to search for something on there. Everything's just opening on top of each other, which is aggravating.” - UX interview with Interventional Radiologist, USA

The design of the entire user experience of our AI tool has involved radiologists and other clinicians at every step, which has helped generate feedback to ensure that the software is...

Conclusion

The inclusion of interpretability techniques was well received across multiple rounds of user interviews, reflecting a demand from the broader radiological community to demystify the black box of AI. Future AI work should involve radiologists at every step of the design process in order to address workflow and UI concerns, especially as regulatory authorities move towards guidelines that aim to ensure a safer and more interpretable AI future.

Personal information and conflict of interest

C. Tang:

Employee: Annalise.ai

J. C. Y. Seah:

Employee: Annalise.ai

Q. Buchlak:

Employee: Annalise.ai

C. Jones:

Employee: Annalise.ai

References

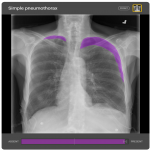

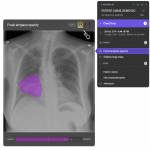

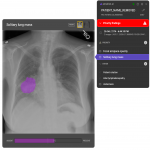

All images are used with permission from Annalise.ai.

All chest radiographs analysed here are from MIMIC-CXR 2.0.0: Johnson, A., Pollard, T., Mark, R., Berkowitz, S., & Horng, S. (2019). MIMIC-CXR Database (version 2.0.0). PhysioNet. https://doi.org/10.13026/C2JT1Q.

[1] Hosny A, Parmar C, Quackenbush J, Schwartz LH, Aerts HJWL. Artificial intelligence in radiology. Nat Rev Cancer. Springer Science and Business Media LLC; 2018;18(8):500–510 http://dx.doi.org/10.1038/s41568-018-0016-5.

[2] Recht MP, Dewey M, Dreyer K, et al. Integrating artificial intelligence into the clinical practice of radiology: challenges and recommendations. Eur Radiol. Springer Science...