Business intelligence (BI) refers to applications and practices for the collection, integration, analysis, and presentation of business information. The aim of these software is to allow the user for the easy interpretation of a large quantity of data which leads to a better decision making.

However, these kind of software can be also used for the analysis of medical radiation data. Consequently, we propose to use a BI application as a revolutionary new solution for currently RMS limitations.

1. First step: data extraction

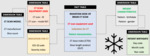

Firstly, these systems collect structured data (data that resides in a fixed field within a record or file) and unstructured data (data not labeled) which is later transform into structured. Structured data has the advantage of being easily entered, stored, queried and analyzed, and it is usually managed using Structured Query Language (SQL), a programming language created for managing and querying data in relational database management systems (Fig. 1).

Furthermore, there are two main approaches of data collection: extract, transform and load (ETL) (Fig. 2); and extract, load and transform (ELT) (Fig. 3). Both strategies are been used as data-mining to collect and supply data to Artificial Intelligence software that are enable to automatically analyse these data and to learn from it.

Radiation monitoring systems gather the information from radiology information systems (RIS) and modalities through the DICOM standard. They are DICOM-driven systems. Nevertheless, the data send to RMS by RIS and modalities are often inaccurate and heterogeneous because data coming from worklists is not always homogenously transformed by modalities. By contrast, BI applications gather data coming from many other sources apart from RIS and modalities and not just through the DICOM standard. In addition, BI applications are enable to transform and integrate all data in an homogenous manner by ETL and ELT technologies so corrupted information is corrected.

2. Second step: data storage and data warehouse, the core of BI applications

BI software obtain data from different sources and integrate it to build a data warehouse (DW). They gather information from radiological equipment, patient’s medical history, RIS, and many other sources, they remove the repeated information, and they convert it into a unified format to be used throughout the institution.

Data warehouses are the core of BI software and they are defined as collection of data derived from operational systems and external data sources. They store current and historical data in one single place uploaded from operational systems after a process of data cleaning (detecting and correcting or removing corrupt or inaccurate records) to ensure data quality.

Prior to continue with the concept of DW, it is properly to define traditional operational databases management systems or On Line Transaction Processing databases (OLTP). They are a kind of databases used to manage dynamic data in real-time, since data frequently changes as updates are made and reflect the current value.

Traditional OLTP are used to manage dynamic data in real-time and they serve as the source of information for the DW. By contrast, DW integrates several OLTP and they organize and present information in specific formats to accommodate the diverse needs of various users in the purpose of data analysis and decision-making (Fig. 1).

Data warehouses are optimized for analytic access patterns. Unlike OLTP which maintain a snapshot of the studied scenario, DW generally maintain an infinite history which is implemented through ETL processes that periodically migrate data from the OLTP over to the DW (Fig. 1).

2.1 Main characteristics of data warehouses

- Topic-oriented: oriented to an specific topic.

- Integrated and consistent data: the way data is extracted and transformed is uniform, regardless of the original source.

- Time-variant: data is organized via time-periods (e.g. weekly, monthly, annually).

- Non-volatile: a DW is not updated in real-time. It is periodically updated via the uploading of data, protecting it from the influence of momentary changes.

2.2 Main components of data warehouses

- Data marts: subsets of the DW usually oriented to a specific work group, task or aim.

- Operational Data Storage (ODS): they integrate information from multiple sources (OLTPs) after cleaning it and resolving redundancy. Unlike in DW, new data coming into the ODS overwrites existing data.

- Staging area: Intermediate storage area between the sources of information and DW.

2.3 Elements of data warehouses

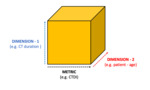

- Facts: the processes we would like to analyse and in which measures occur. For example: a computed tomography (CT) exploration.

- Measures (metrics): a property on which calculations can be made. For example: the radiation dose of a CT scan.

- Dimensions: a dimension is a structure that categorizes facts and measures. They are the different views of the same process. For instance: The model of CT equipment used.

- Hierarchies: hierarchies are logical structures that use ordered levels as a means of organizing data.

2.4 Data model schemas of data warehouses

Data warehouses are developed using multidimensional model schemas:

- Star schema: this model consists of a central fact table which represents the fact we would like to study and which connects to the different possible dimensions of this fact. The fact table consists of the measurable and quantitative data while the dimension table is made up of descriptive attributes that are related to fact data. In this model, each dimensions have just one table (Fig. 4).

- Snowflake schema: snowflake schema results of normalizing the dimension tables in a star schema. In this model, there is also a centralized fact table which are connected to multiple dimensions, but unlike star schema, dimensions tables are also connected to other sub-dimensions tables. It is a more complex model but it needs less space than star scheme (Fig. 5).

Star schema and snowflake schema have much better query performance than traditional databases. They have a small number of tables and clear join paths, so queries run faster than they do against an OLTP system. Small single-table queries, usually of dimension tables, are almost instantaneous. Large join queries that involve multiple tables take only seconds or minutes to run. In addition, when two dimension tables are used in a query, only one join path, intersecting the fact table, exists between those two tables. This design feature enforces accurate and consistent query results.

Furthermore, star and snowflake schemas are easy to understand and navigate because they represent the fundamental relationship between parts of the process we are studying, and the time required to load large batches of data into the databases is reduced due to their structural simplicity.

3. Third step: data analysis and visualization of data

BI applications use online analytical processing (OLAP) tools to be able to answer multi-dimensional analytical queries. OLAP performs multidimensional analysis of data and provides the capability for complex calculations, trend analysis, and sophisticated data modeling (Fig. 1).

Furthermore, unlike traditional RMS, multidimensional databases provided by BI software allow to make searches with completely customisable filters and the visualization of data in helpful dashboards in which multiple dimensions and metrics can be represented.

The databases representations are the OLAP cubes or hypercubes, which are data structures that allows fast analysis of data according to the multiple dimensions that define the problem. In OLAP cubes all possible totals of databases values are pre-calculated, providing instant access to queries too complex for traditional RMS (Fig. 6).

The arrangement of data into cubes overcomes a limitation of relational databases, which are not well suited for near instantaneous analysis and display of large amounts of data. Although many report-writing tools exist for relational databases, these are slow when the whole database must be summarized, and present great difficulties when users wish to re-orient reports or analyses according to different multidimensional perspectives. The use of cubes facilitate this kind of fast end-user interaction with data. OLTP databases are better than OLAP for functions of insert, delete and update, but OLAP are better for functions of select.