Interpreting AI

While most doctors are familiar with metrics such as sensitivity and specificity, which are still used when reporting AI research, there are other metrics applicable to computer science (and also some overlap of terms). To understand this new universe of AI, doctors will need to acquire a new language.

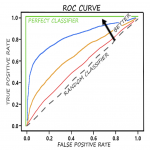

AUC-ROC

Area Under the Curve of the Receiver Operating Characteristic curve. The curve is derived by plotting the True Positive Rate (or Sensitivity) against the False Positive Rate (or 1 - Specificity) [9]. It is worth noting that 0.5 is the worst score for AUC-ROC, because it means the tool discriminates no better than chance. A score of zero would actually mean the tool was predicting or classifying perfectly inversely.

1 = perfect

0.5 = no discrimination

0 = inversely predicting
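
As a minimal sketch of what these three values mean, AUC-ROC can be computed as the proportion of positive/negative pairs in which the positive case receives the higher score (ties counting as half). This pairwise formulation is equivalent to the area under the ROC curve. The function name `auc_roc` and the labels and scores are hypothetical, chosen only for illustration.

```python
def auc_roc(labels, scores):
    """AUC-ROC as the probability that a random positive case scores
    higher than a random negative case (ties count as half)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


labels = [0, 0, 1, 1]  # hypothetical ground truth: two healthy, two diseased

perfect = auc_roc(labels, [0.1, 0.2, 0.8, 0.9])        # diseased always score higher
uninformative = auc_roc(labels, [0.5, 0.5, 0.5, 0.5])  # same score for everyone
inverse = auc_roc(labels, [0.9, 0.8, 0.2, 0.1])        # diseased always score lower

print(perfect, uninformative, inverse)  # 1.0 0.5 0.0
```

The inverse tool is as informative as the perfect one once its output is flipped, which is why 0.5, not 0, is the worst score.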

Pixel Accuracy

The percentage of pixels in an image that are classified correctly. While seemingly simple to understand, it can be misleading, particularly when the structure of interest occupies only a small fraction of the image, and it is not commonly used.
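
The sketch below illustrates one way pixel accuracy can mislead. It assumes a hypothetical 10 x 10 ground-truth mask containing a single lesion pixel, and a tool that labels every pixel as background. The function name `pixel_accuracy` and the masks are illustrative only.

```python
def pixel_accuracy(pred, truth):
    """Fraction of pixels whose predicted label matches the ground truth."""
    correct = 0
    total = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            correct += 1 if p == t else 0
            total += 1
    return correct / total


# Hypothetical 10 x 10 ground truth: one lesion pixel, 99 background pixels.
truth = [[0] * 10 for _ in range(10)]
truth[4][4] = 1

# A tool that finds nothing at all: every pixel labelled background.
all_background = [[0] * 10 for _ in range(10)]

accuracy = pixel_accuracy(all_background, truth)
print(accuracy)  # 0.99
```

The tool misses the lesion entirely yet still scores 99%, because the background pixels dominate the count.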

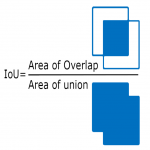

Jaccard Index (or Intersection-Over-Union)

The area of overlap between the predicted segmentation and the ground truth divided by the area of union between the predicted segmentation and the ground truth.

Produces a number between 0 and 1 where:

0 = no overlap (worst)

1 = perfectly overlapping (best)
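
A minimal sketch of the calculation on binary masks follows. The function name `jaccard_index`, the 4 x 4 masks, and the choice to return 1.0 when both masks are empty are assumptions made for illustration.

```python
def jaccard_index(pred, truth):
    """Intersection-over-union of two binary masks (lists of rows of 0/1)."""
    intersection = 0
    union = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            intersection += 1 if (p and t) else 0
            union += 1 if (p or t) else 0
    # Assumption: two empty masks are treated as perfect agreement.
    return intersection / union if union else 1.0


# Hypothetical ground truth: a 2 x 2 square in the middle of a 4 x 4 image.
truth = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
# Hypothetical prediction: the same square shifted one pixel to the right.
pred = [
    [0, 0, 0, 0],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 0, 0],
]

iou = jaccard_index(pred, truth)  # overlap of 2 pixels, union of 6 pixels
print(round(iou, 3))  # 0.333
```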

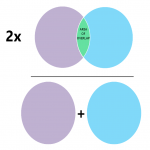

Dice Coefficient (F1 Score)

The definition of the F1 Score depends on whether it is used in a classification task or in a segmentation task.

For classification tasks: Calculated using ‘Recall’ (Sensitivity) and ‘Precision’ (Positive Predictive Value). It can help to think of Recall as pertaining to FALSE NEGATIVES (higher recall means fewer false negatives) and Precision as pertaining to FALSE POSITIVES (higher precision means fewer false positives). F1 is a mathematical function of the two (their harmonic mean).

$$F_1 = \frac{2 \times \text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}}$$
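
As a rough worked example of the classification formula, the sketch below computes Precision, Recall and F1 from counts of true positives, false positives and false negatives. The function name and the counts are hypothetical, and returning 0.0 when a denominator is zero is an assumed convention.

```python
def precision_recall_f1(tp, fp, fn):
    """Precision, recall and F1 from confusion-matrix counts."""
    # Assumption: a metric with a zero denominator is reported as 0.0.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical study: 80 true positives, 20 false positives, 40 false negatives.
precision, recall, f1 = precision_recall_f1(tp=80, fp=20, fn=40)
print(round(precision, 3), round(recall, 3), round(f1, 3))  # 0.8 0.667 0.727
```

Note that true negatives do not appear anywhere in the calculation, so F1 says nothing about how well the tool identifies normal cases.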

For segmentation tasks: 2 x the Area of Overlap divided by the total number of pixels in the predicted segmentation and the ground truth combined.

Produces a number between 0 and 1 where 1 is best and 0 is worst.
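
The segmentation form can be sketched in the same way. Here "total number of pixels" is taken to mean the segmented (foreground) pixels in the prediction plus those in the ground truth. The function name `dice_coefficient`, the masks, and the convention for two empty masks are assumptions for illustration.

```python
def dice_coefficient(pred, truth):
    """Dice coefficient of two binary masks (lists of rows of 0/1)."""
    overlap = 0
    pred_pixels = 0
    truth_pixels = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            overlap += 1 if (p and t) else 0
            pred_pixels += 1 if p else 0
            truth_pixels += 1 if t else 0
    total = pred_pixels + truth_pixels
    # Assumption: two empty masks are treated as perfect agreement.
    return 2 * overlap / total if total else 1.0


# Hypothetical ground truth: a 2 x 2 square in a 4 x 4 image.
truth = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
# Hypothetical prediction: the same square shifted one pixel to the right.
pred = [
    [0, 0, 0, 0],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 0, 0],
]

dice = dice_coefficient(pred, truth)  # 2 x 2 overlapping pixels / (4 + 4)
print(dice)  # 0.5
```

For the same pair of masks the Jaccard Index is 2/6, or about 0.33. The two metrics are related by Dice = 2J / (1 + J), so Dice is never lower than Jaccard for the same segmentation, and the two should not be compared directly across papers.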

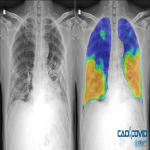

Saliency Maps and Class Activation Mapping

One of the main issues with deep learning is that it is a ‘closed box’. The only way to see if it is working correctly is to run the program and manually check that the result is what you are expecting. However, it is possible for AI to return seemingly ‘correct’ results, even with high accuracy, by relying on the wrong features in the data (e.g. using sarcopenia to accurately predict survival instead of tumour characteristics). This is one of the reasons deep learning techniques can require large and varied data sets.

Often the only way to check on this is with visual representations, such as saliency or class activation maps. Usually depicted in a ‘heat map’ format, these allow researchers to check that the AI is using the correct area of the image to make its decision.
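
To give a feel for how such a heat map can be produced, the sketch below uses occlusion sensitivity, a simpler relative of gradient-based saliency and class activation mapping rather than either of those methods themselves. Each pixel is blanked out in turn and the drop in the model's score is recorded. The `toy_model` function is a hypothetical stand-in for a trained network and is deliberately built to look only at the top-left corner of the image.

```python
def toy_model(image):
    """Hypothetical stand-in for a trained network: its score depends
    only on the top-left 2 x 2 corner of the image."""
    return sum(image[r][c] for r in range(2) for c in range(2)) / 4.0


def occlusion_map(model, image, fill=0.0):
    """Heat map of how much the score drops when each pixel is blanked out."""
    baseline = model(image)
    heat = []
    for r in range(len(image)):
        heat_row = []
        for c in range(len(image[0])):
            occluded = [list(row) for row in image]  # copy, leave input intact
            occluded[r][c] = fill
            heat_row.append(baseline - model(occluded))
        heat.append(heat_row)
    return heat


image = [[1.0] * 4 for _ in range(4)]  # hypothetical uniform 4 x 4 image
heat = occlusion_map(toy_model, image)
for heat_row in heat:
    print(heat_row)
```

Only the top-left four pixels receive a non-zero value, revealing where the toy model is 'looking'. If the lesion of interest were elsewhere in the image, this would flag that the model was reaching its answer for the wrong reason.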