Results: Thirty studies from the emergency department were selected, ensuring a mix of ‘positive’ and ‘normal’ studies. These comprised 10 CT abdomen, 10 CT chest/CTPA and 10 trauma pan-scan studies. The reports were authored by consultant radiologists (6 reports), senior registrars (14) and junior registrars (10).

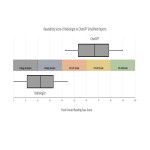

The average FKRE score of the original conclusions was 20.3 (standard deviation 17.1), corresponding to the reading level of a university graduate. After simplification by ChatGPT, the average score increased to 65.2 (standard deviation 15.7), which is appropriate for a 12-year-old school child to understand (figure 1). However, 6/30 reports showed no improvement in readability.
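To make the scoring concrete, the following is a minimal sketch of a readability calculation. It assumes that FKRE refers to the Flesch reading-ease score (higher values indicate easier text), which is consistent with the scores reported above but is not spelled out in this section. The syllable counter is a crude vowel-group heuristic and the two sample sentences are illustrative only, not taken from the study, so the values it produces will differ from those of a dedicated readability tool.

```python
import re


def count_syllables(word: str) -> int:
    """Rough syllable estimate: number of vowel groups, with a minimum of one."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))


def flesch_reading_ease(text: str) -> float:
    """Flesch reading ease: 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one word")
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word


# Illustrative sentences (hypothetical, not from the study data).
original = "No acute intracranial haemorrhage."
simplified = "There is no new bleed inside the head."

print(f"original:   {flesch_reading_ease(original):.1f}")
print(f"simplified: {flesch_reading_ease(simplified):.1f}")
```

With this heuristic the simplified sentence scores higher than the original, mirroring the direction of change reported for the study conclusions.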

All 30 simplified reports were deemed by both authors to convey the information appropriately, with no critical additions or omissions within the conclusion. When the original text contained vague or erroneous statements, the simplified text accurately reflected this uncertainty.

Discussion: The changes introduced by ChatGPT to create simplified text predominantly relied on reducing or explaining medical jargon. For example, ‘haemorrhage’ would often be substituted with ‘bleed’, ‘intracranial’ with ‘inside head’ and ‘pulmonary embolism’ with ‘blood clot in lungs’. Occasionally a report would be lengthened in order to explain difficult concepts, such as ‘Left PUJ obstruction…favoured to reflect calculus’ altered to ‘…blockage where the kidney meets the ureter on the left side’. Example reports are presented in table 1.

Interestingly, one report simplified by ChatGPT introduced a new management recommendation which, while not strictly incorrect, could be considered inappropriate without further clinical details. For a patient with acute appendicitis, the ChatGPT-simplified text stated “…The doctor will likely recommend surgery to remove the appendix”. This statement was not present in the original conclusion (see table 2). By instructing ChatGPT to avoid recommendations or opinions on management, this unexpected addition could be suppressed.
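As an illustration of how such an instruction might be attached to a request, the sketch below assembles a prompt string with an optional constraint against management advice. The function name `build_simplification_prompt` and the prompt wording are hypothetical; the exact prompt used in the study is not given in this section, and no call to any model API is made here.

```python
def build_simplification_prompt(conclusion: str, forbid_recommendations: bool = True) -> str:
    """Assemble a plain-language rewrite prompt (hypothetical wording, not the study's prompt)."""
    lines = [
        "Rewrite the following radiology report conclusion in plain language "
        "that a patient can understand.",
        "Do not add or remove findings, and keep any uncertainty expressed in the original.",
    ]
    if forbid_recommendations:
        # Constraint intended to suppress unprompted management advice.
        lines.append("Do not give recommendations or opinions on management or treatment.")
    lines.append("")
    lines.append("Conclusion: " + conclusion)
    return "\n".join(lines)


# Hypothetical conclusion text used only to show the resulting prompt.
prompt = build_simplification_prompt("Findings in keeping with acute appendicitis.")
print(prompt)
```

Keeping the constraint as a separate, switchable line makes it straightforward to compare outputs with and without the instruction.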

ChatGPT was unable to improve readability in 6/30 report conclusions. Of these, two were short conclusions in which simplification was deemed irrelevant (‘no acute intracranial pathology’), while two others were trauma studies with multiple positive findings. In the setting of multiple findings, the sentence length and total number of words are inflated, which lowers the FKRE score. The authors believe the ChatGPT-altered texts were nevertheless simplified appropriately for the patient. An example is supplied in table 3.
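The effect of sentence length can be sketched with simple arithmetic, again assuming FKRE denotes the Flesch reading-ease score, in which higher values mean easier text. The word, sentence and syllable counts below are hypothetical and chosen only to hold vocabulary difficulty constant while varying sentence length.

```python
def flesch_reading_ease_from_counts(words: int, sentences: int, syllables: int) -> float:
    """Flesch reading ease computed directly from counts."""
    return 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)


# Hypothetical counts: identical vocabulary (1.5 syllables per word),
# written either as four short sentences or as one long sentence.
short_sentences = flesch_reading_ease_from_counts(words=40, sentences=4, syllables=60)
one_long_sentence = flesch_reading_ease_from_counts(words=40, sentences=1, syllables=60)

# The gap comes entirely from the sentence-length term of the formula.
penalty = short_sentences - one_long_sentence
print(f"four short sentences: {short_sentences:.1f}")
print(f"one long sentence:    {one_long_sentence:.1f}")
print(f"penalty:              {penalty:.2f}")
```

Under this formula, listing many findings in a single long sentence is scored as harder to read even when the words themselves are no more difficult.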

The alterations by ChatGPT demonstrate a convincing improvement in readability of the radiology report. While this presumably improves overall patient understanding, we did not investigate the impact of a simplified report on the clinician. A reduction in jargon can make a text more accessible to laypersons; however, jargon can also be a useful mode of communication between experts, allowing efficient discussion of specialised topics. One could envision a scenario in which multiple reports are created and tailored to each relevant participant, so as to maximise their ability to understand and interact with the information provided. The speed and preservation of meaning provided by NLP models may make them well suited to such a task.