Artificial intelligence (AI) can be employed in radiology for more than image analysis; it is a powerful tool that can drastically change daily practice. However, AI algorithms can perpetuate human biases, and their decision making often lacks transparency. Artificial intelligence and automated decision-making also raise questions about who is liable for violations, as in the example of the deadly autonomous car crash in March 2018 [3]. Guiding principles for AI applications in radiology ought to include advancement of public good and social value, promotion of safety and good governance, demonstration of transparency and accountability, and engagement of all affected communities and stakeholders. Therefore, patients, radiologists, researchers, other stakeholders, and governments must work together to enact an ethical framework for AI.

Primarily,

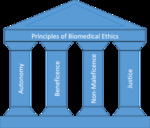

the ethical framework for AI applications in radiology should reflect the core principles of biomedical ethics—autonomy,

beneficence,

non-maleficence,

and justice ( Fig. 1 ) [4].

Moreover,

this ethical framework should also comprise specific issues that AI applications are prone to violate,

such as transparency and accountability [5–8].

Many organizations have already produced statements to guide the applications of AI ( Fig. 2 ) such as the European Commission-drafted Ethics Guidelines for Trustworthy AI [7],

the Asilomar AI Principles [8] and the Montreal Declaration for Responsible AI [9].

Here we would like to suggest an ethical framework tailored for AI applications in radiology,

under the light of core biomedical ethics principles and the statements that were proposed for ethical challenges posed by AI ( Fig. 3 ).

Autonomy

The principle of autonomy means that patients have the right to make their own choices. If patients lack decision-making capacity (e.g., children or patients with impaired mental capacity), a surrogate is responsible for exercising their rights. In healthcare, informed consent is used to uphold the autonomy principle [4].

Medical images contain not just pixel information but also additional data such as patient demographics, technical image parameters, and institutional information, including protected health information (PHI). Depending on the region scanned, body contours can be rendered, and even facial recognition could be possible from medical images. To develop and implement AI in radiology, medical images can be used repeatedly by algorithms in training and validation processes; therefore, questions of data privacy and data ownership arise [10]. Access to PHI should be granted only on a need-to-know basis, and data collection should be audited by institutional review boards to protect data usage and to ensure compliance with the patient’s consent [11]. These data access and security measures need to be built into the data handling process. In the new AI era, informed consent ought to be adapted so that it also accounts for repeated usage.

To ensure patient privacy and confidentiality, the General Data Protection Regulation (GDPR), which was recently enacted in Europe, should be followed. Because AI algorithms may make it possible to trace data back to individual patients, the GDPR includes data pseudonymization, along with anonymization, to enhance data protection [12]. Data collection and usage in AI also raise concerns about data breaches; therefore, patient data must be protected from cyber-attacks [13].
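As a rough illustration of what pseudonymization of image metadata could look like in practice, the following Python sketch replaces a patient identifier with a keyed hash and drops directly identifying fields. The field names, the `DIRECT_IDENTIFIERS` set, and the `pseudonymize` function are hypothetical and chosen only for this example; they are not taken from the GDPR or from any specific imaging standard. The sketch does not address identifying information in the pixel data itself, such as facial contours.

```python
import hashlib
import hmac

# Hypothetical set of directly identifying metadata fields (an assumption for
# this sketch); a real project would follow its own data-protection policy.
DIRECT_IDENTIFIERS = {"patient_name", "birth_date", "institution"}


def pseudonymize(record: dict, secret_key: bytes) -> dict:
    """Return a copy of `record` without direct identifiers, with the
    patient ID replaced by a keyed hash (the pseudonym)."""
    # HMAC-SHA256 maps the same patient ID to the same pseudonym under a given
    # key, so records stay linkable without exposing the original ID.
    pseudonym = hmac.new(
        secret_key, record["patient_id"].encode("utf-8"), hashlib.sha256
    ).hexdigest()
    cleaned = {
        key: value
        for key, value in record.items()
        if key not in DIRECT_IDENTIFIERS and key != "patient_id"
    }
    cleaned["pseudonym"] = pseudonym
    return cleaned


if __name__ == "__main__":
    example = {
        "patient_id": "12345",
        "patient_name": "Jane Doe",
        "birth_date": "1970-01-01",
        "institution": "Example Hospital",
        "modality": "MR",
    }
    print(pseudonymize(example, secret_key=b"keep-this-key-separate"))
```

In such a scheme, the secret key would need to be stored separately from the data, because anyone holding the key can recompute the pseudonym for a known patient ID.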

Currently, initiatives such as healthbank.coop let individual patients decide who can access which data and under which conditions, and also enable individual monetary compensation.

Beneficence, non-maleficence

The principles of beneficence (“do good”) and non-maleficence (“do no harm”) are closely related; they call for impartiality and for avoiding harm to the patient or anything that could work against the patient’s well-being [4].

Artificial intelligence applications in radiology should be designed in a way that reflects both principles and improves individual and collective well-being [9].

Potential applications of AI in radiology include optimization of lesion detection, segmentation, and characterization, as well as re-organization of the clinical workflow. On the one hand, AI-aided patient stratification would allow faster and more accurate decision-making and improve the well-being of individuals. On the other hand, such stratification could be used for commercial, non-beneficial purposes. For instance, insurance companies could stratify patients according to their expected outcomes and raise fees accordingly. Alternatively, AI algorithms could be trained on biased data and, furthermore, programmed to increase the profits of their designers [10]. Strategies are also needed for handling new true-positive findings in retrospective analyses and new false-positive findings in prospective settings.

Justice

The justice principle requires a fair distribution of medical goods and services [4]. The development of AI should promote justice by eliminating unfair discrimination, ensuring shareable benefits, and preventing the infliction of new harm that can arise from implicit bias [6,10]. Interests might differ between the AI developer and the AI user, and some parties might follow the ethical code only partly. Therefore, ethical AI design also needs to account for unethical human interventions [3].

Explicability (Transparency and Accountability)

The principles of transparency and accountability can be gathered under the explicability principle [5–7]. Artificial intelligence systems should be auditable, comprehensible, and intelligible to “natural” intelligence at every level of expertise, and the intentions of the developers and implementers of AI systems should be explicitly shared [7].

Transparency: If an AI system fails or causes harm, we should be able to determine the underlying reasons, and if the system is involved in decision-making, there should be satisfactory explanations for the decision process. This process should be auditable by healthcare providers or authorities, thus enabling legal liability to be assigned to an accountable body [8,11]. Artificial intelligence algorithms may be susceptible to differences in imaging protocols and variations in patient characteristics. Therefore, transparent communication about patient selection criteria, as well as rigorous validation of algorithms, is required to ensure that results obtained on training datasets generalize to different centers [14].
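One simple way to make such validation concrete is to report diagnostic performance separately for each participating center, so that a drop in performance at one site is not hidden in pooled results. The Python sketch below is a minimal illustration under our own assumptions: the function name `per_center_metrics` and the input format (center label, reference standard, algorithm output) are hypothetical, and a real validation study would also report confidence intervals and patient selection criteria.

```python
from collections import defaultdict


def per_center_metrics(cases):
    """Compute sensitivity and specificity per center.

    `cases` is an iterable of (center, truth, prediction) tuples, where
    `truth` is the reference standard and `prediction` is the algorithm
    output, both given as booleans (True = finding present).
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for center, truth, prediction in cases:
        if truth:
            key = "tp" if prediction else "fn"
        else:
            key = "fp" if prediction else "tn"
        counts[center][key] += 1

    metrics = {}
    for center, c in counts.items():
        positives = c["tp"] + c["fn"]
        negatives = c["tn"] + c["fp"]
        metrics[center] = {
            # None marks a metric that is undefined for this center
            # (no positive or no negative cases).
            "sensitivity": c["tp"] / positives if positives else None,
            "specificity": c["tn"] / negatives if negatives else None,
        }
    return metrics


if __name__ == "__main__":
    example_cases = [
        ("center_A", True, True),
        ("center_A", True, False),
        ("center_A", False, False),
        ("center_B", True, True),
        ("center_B", False, True),
    ]
    for center, values in per_center_metrics(example_cases).items():
        print(center, values)
```

Large differences between centers in such a table would suggest that the algorithm is sensitive to local imaging protocols or patient populations.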

Accountability: Autonomous diagnosis- or treatment-related decisions made by AI raise the question of who is accountable for these decisions and open a debate over who is responsible if an autonomous system makes a mistake: its developer or its user [13]. Before broad adoption, AI applications in radiology should be held to the same degree of accountability as new medications or medical devices used in radiology [14].

Research ethics for AI

In computer science, and particularly in AI research, a growing number of articles are published in repositories such as arxiv.org. However, due to the lack of peer review and the questionable nature of data allocation, those publications might contain errors. Therefore, before the implementation of any AI method, we need peer-reviewed and transparent research. Like any application developed for diagnostic purposes, AI applications also need to follow the Standards for Reporting of Diagnostic Accuracy Studies (STARD) statement [15].
