Keywords:

Artificial Intelligence, Computer applications, Head and neck, CT, Computer Applications-Detection, diagnosis, Computer Applications-General

Authors:

S. Chilamkurthy1, S. Tanamala2, R. Ghosh2, P. Rao2, V. Mahajan3; 1Mumbai, Ma/IN, 2Mumbai/IN, 3New Delhi/IN

DOI:

10.26044/ecr2019/C-2937

Methods and materials

We considered a scan critical if it showed any of the following: intracranial hemorrhage, fracture, mass effect, or midline shift.

We ran deep learning algorithms that detect intracranial hemorrhage, skull fracture, midline shift, and mass effect on a training dataset of 21,095 scans.

The predictions of these algorithms were used as features to train a random forest model that predicts the criticality of a scan.

The probability output by this random forest model is used as the criticality score of a new scan, a value between 0 and 1.
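The scoring step can be sketched as follows. This is a minimal illustration, assuming scikit-learn's `RandomForestClassifier` and synthetic detector outputs. The feature layout, the hyperparameters, and the data are assumptions made for the example and are not taken from the study.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

rng = np.random.default_rng(0)
n_scans = 1000

# Synthetic stand-in for the training data (not the study's scans).
# Each row holds four hypothetical per-scan detector outputs:
# hemorrhage, fracture, midline shift, mass effect.
critical = rng.random(n_scans) < 0.2
features = np.where(
    critical[:, None],
    rng.beta(4, 2, size=(n_scans, 4)),  # critical scans: higher detector outputs
    rng.beta(2, 4, size=(n_scans, 4)),  # other scans: lower detector outputs
)

# Hyperparameters are illustrative; the source does not report them.
model = RandomForestClassifier(n_estimators=100, random_state=0)
model.fit(features, critical)

# Criticality score = predicted probability of the "critical" class.
new_features = rng.random((5, 4))
scores = model.predict_proba(new_features)[:, 1]
print(scores)
```

Because the score is a class probability, it always lies between 0 and 1 and can be used directly as a sort key for a worklist.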

To validate the algorithms, we collected all head CT scans acquired in one month (the CQ200 dataset) from six centers in New Delhi, India.

About half the centers were outpatient radiology centers and the other half were radiology departments embedded in large hospitals.

Three independent radiologists read these scans (mean read time 3.25 mins) and marked whether each scan was critical.

Their consensus was considered the gold standard.

To test the clinical efficacy of reordering the worklist using the algorithms, we ran a simulated randomized controlled trial as follows.

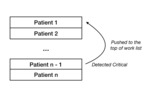

A random queue of 20 scans was generated from the CQ200 dataset to represent the usual first-in, first-out (FIFO) reading order of the scans.

This queue was then reordered by predicted criticality score so that critical scans were at the top of the queue.

The average time to read gold-standard critical scans was measured for both queues, assuming that a radiologist takes 3.25 mins per scan.

This process was repeated 10,000 times to calculate the average reading time of gold-standard critical scans for the FIFO and reordered queues.
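The simulation described above can be sketched in a few lines of Python. This is an illustrative reconstruction under stated assumptions, not the authors' code:

- "Time to read" is counted from the start of the queue until a scan's read is complete.
- Queues are sampled without replacement.
- Queues that contain no critical scan are skipped.
- The toy dataset is synthetic and does not reflect CQ200.

```python
import random

READ_MINUTES = 3.25   # per-scan read time stated in the text
QUEUE_SIZE = 20       # queue length stated in the text
N_TRIALS = 10_000     # number of repetitions stated in the text


def mean_time_to_critical(queue, read_minutes=READ_MINUTES):
    """Mean minutes from queue start until each critical scan has been read.

    Assumption: the scan at 0-based position i is finished at
    (i + 1) * read_minutes. Returns None if no scan in the queue is critical.
    """
    times = [(i + 1) * read_minutes
             for i, scan in enumerate(queue) if scan["critical"]]
    return sum(times) / len(times) if times else None


def simulate(dataset, n_trials=N_TRIALS, queue_size=QUEUE_SIZE, seed=0):
    """Return (FIFO average, reordered average) over the simulated queues."""
    rng = random.Random(seed)
    fifo_times, reordered_times = [], []
    for _ in range(n_trials):
        fifo = rng.sample(dataset, queue_size)  # random arrival order
        # Highest predicted criticality score is read first.
        reordered = sorted(fifo, key=lambda s: s["score"], reverse=True)
        t_fifo = mean_time_to_critical(fifo)
        if t_fifo is None:
            continue  # assumption: skip queues without a critical scan
        fifo_times.append(t_fifo)
        reordered_times.append(mean_time_to_critical(reordered))
    return (sum(fifo_times) / len(fifo_times),
            sum(reordered_times) / len(reordered_times))


def make_toy_dataset(n_scans=150, prevalence=0.2, seed=1):
    """Hypothetical scans: gold-standard label plus an informative score."""
    rng = random.Random(seed)
    dataset = []
    for _ in range(n_scans):
        critical = rng.random() < prevalence
        score = rng.uniform(0.5, 1.0) if critical else rng.uniform(0.0, 0.6)
        dataset.append({"critical": critical, "score": score})
    return dataset


toy_dataset = make_toy_dataset()
fifo_avg, reordered_avg = simulate(toy_dataset)
print(f"FIFO: {fifo_avg:.1f} min, reordered: {reordered_avg:.1f} min")
```

In a random FIFO queue, a critical scan is equally likely to sit at any position. Under the completion-time convention assumed here, its expected time to read is therefore about (20 + 1) / 2 × 3.25 ≈ 34 minutes. How far the reordered queue falls below that figure depends on how well the score separates critical from non-critical scans.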