Keywords:

Artificial Intelligence, Digital radiography, Neural networks, Computer Applications-Detection, diagnosis, Acute

Authors:

J. W. Luo, J. J. R. Chong; Montreal, QC/CA

DOI:

10.26044/ecr2019/C-3491

Results

Classification performance:

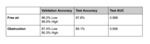

For pneumoperitoneum,

5-fold cross-validation accuracies during training ranged from 96.2% to 99.5%.

The final network epoch was selected for the epoch with the lowest cross-validation loss.

Out-of-sample test accuracy of the network was 97.8%,

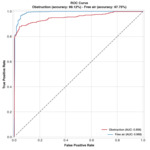

while its AUC was 0.988 (Fig. 2).
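The epoch-selection rule stated above (keep the epoch with the lowest cross-validation loss) can be sketched as follows. This is a minimal illustration, not the authors' code: the function name `select_best_epoch`, the averaging of the loss across folds, and the toy loss values are all assumptions, since the poster does not say how per-fold losses were aggregated.

```python
from statistics import mean


def select_best_epoch(fold_losses):
    """Return (epoch_index, mean_loss) for the epoch with the lowest mean validation loss.

    fold_losses[f][e] is the validation loss of fold f after epoch e (0-based).
    Averaging across folds is an assumption made for this sketch.
    """
    n_epochs = len(fold_losses[0])
    if any(len(losses) != n_epochs for losses in fold_losses):
        raise ValueError("every fold must report the same number of epochs")
    # Average the validation loss across folds, epoch by epoch.
    mean_per_epoch = [mean(fold[e] for fold in fold_losses) for e in range(n_epochs)]
    # Pick the epoch whose averaged loss is smallest.
    best = min(range(n_epochs), key=mean_per_epoch.__getitem__)
    return best, mean_per_epoch[best]


# Made-up losses for 3 folds x 4 epochs, purely illustrative.
toy_losses = [
    [0.60, 0.40, 0.30, 0.35],
    [0.65, 0.45, 0.25, 0.30],
    [0.70, 0.50, 0.35, 0.40],
]
best_epoch, best_loss = select_best_epoch(toy_losses)
```

In this toy example the loss starts rising again after the third epoch, so the third epoch (index 2) is kept rather than the last one trained.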

For small-bowel obstruction, 5-fold cross-validation accuracies during training ranged from 87.4% to 90.3%. Out-of-sample test accuracy of the network was 89.1%, while its AUC was 0.956 (Fig. 2).
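For readers less familiar with the two metrics reported above, the sketch below shows how test accuracy and AUC can be computed from per-study labels and predicted probabilities. It is illustrative only: the 0.5 decision threshold, the function names, and the toy data are assumptions, not details taken from the poster.

```python
def accuracy(labels, scores, threshold=0.5):
    """Fraction of studies whose thresholded score matches the binary label."""
    predictions = [1 if s >= threshold else 0 for s in scores]
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def roc_auc(labels, scores):
    """AUC as the probability that a random positive study outscores a random negative one.

    Ties count as half a win (the Mann-Whitney U formulation of the ROC area).
    """
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative study")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Made-up labels (1 = finding present) and network scores, purely illustrative.
toy_labels = [1, 1, 1, 0, 0, 0]
toy_scores = [0.9, 0.8, 0.4, 0.6, 0.3, 0.1]
toy_accuracy = accuracy(toy_labels, toy_scores)
toy_auc = roc_auc(toy_labels, toy_scores)
```

Accuracy depends on the chosen threshold, whereas AUC summarizes ranking quality over all thresholds, which is why the two figures are reported side by side.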

The model's AUC greatly exceeds that of the initial model by Cheng et al. and matches the performance of the refined model that Cheng et al. proposed, while using a smaller dataset and a more efficient CNN architecture [4][5].

Training time per epoch was under 100 seconds for pneumoperitoneum and under 45 seconds for small-bowel obstruction.

Average inference time per study was under 1 second.

Heatmap generation took on average 31 seconds per study.

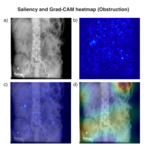

Localization performance:

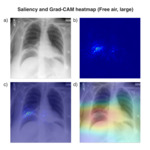

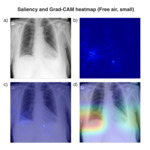

Visualization of the network localization using gradient-weighted class activation mapping (Grad-CAM) demonstrated a promising ability to automatically triage, detect, and select relevant features for acute chest and abdominal radiographs. However, localization precision and resolution differed markedly between pneumoperitoneum and bowel obstruction.
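As a reminder of what a Grad-CAM heatmap encodes, the sketch below implements the core computation in plain Python: each feature map of a convolutional layer is weighted by the spatial average of the class-score gradient, the weighted maps are summed, and negative values are clipped. It assumes the activations and gradients have already been extracted from the network by a deep-learning framework; the function name, the final normalization to [0, 1], and the toy arrays are illustrative assumptions and do not reproduce the authors' pipeline.

```python
def grad_cam(activations, gradients):
    """Compute a Grad-CAM heatmap from one convolutional layer.

    activations[k][i][j]: value of feature map k at spatial position (i, j).
    gradients[k][i][j]:   gradient of the class score with respect to that value.
    Returns an H x W heatmap scaled to [0, 1] (scaling is a display convenience).
    """
    n_channels = len(activations)
    height = len(activations[0])
    width = len(activations[0][0])

    # Channel importance: global average of the gradients over each feature map.
    weights = [sum(sum(row) for row in g) / (height * width) for g in gradients]

    # Weighted sum of the feature maps.
    heatmap = [[0.0] * width for _ in range(height)]
    for k in range(n_channels):
        for i in range(height):
            for j in range(width):
                heatmap[i][j] += weights[k] * activations[k][i][j]

    # ReLU keeps only regions that push the class score up.
    heatmap = [[max(v, 0.0) for v in row] for row in heatmap]

    # Scale to [0, 1] when the map is not all zeros.
    peak = max(max(row) for row in heatmap)
    if peak > 0:
        heatmap = [[v / peak for v in row] for row in heatmap]
    return heatmap


# Toy 2-channel, 2x2 example: channel 0 supports the class, channel 1 opposes it.
toy_activations = [[[1, 0], [0, 0]], [[0, 0], [0, 2]]]
toy_gradients = [[[1, 1], [1, 1]], [[-1, -1], [-1, -1]]]
toy_heatmap = grad_cam(toy_activations, toy_gradients)
```

Because the heatmap has the spatial resolution of the chosen convolutional layer, it is upsampled before being overlaid on the radiograph, which limits how finely a finding can be outlined.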

On thoracic radiographs, the network correctly detected and localized free air to the specific region below the right hemidiaphragm (Fig. 3, Fig. 4). This finding held true whether the report described the pneumoperitoneum as tiny or large.

On abdominal radiographs, however, the network often proposed large or multiple foci that were non-specific for the obstruction itself (Fig. 5). Regions of the lung or stomach were often included in the Grad-CAM visualizations as well.

Based on the erratic behavior of the abdominal localization, we postulate that the neural network may be relying on localized features such as texture and brightness to drive classification. We believe the gap in localization performance is due to the relatively well-conserved, standard view for pneumoperitoneum on chest radiographs and the lack of consistent anatomic positioning for small-bowel obstruction, especially when the network is trained on small datasets.