Reference standard

Eighty-one (27.9%) of the 290 CT studies did not contain any nodules.

The reference standard consisted of 599 nodules in the remaining 209 (72.1%) CT studies.

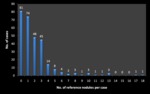

Figure 1 shows the distribution of the number of reference standard nodules per scan. Most of the 209 scans with nodules had one (74/209, 35.4%), two (48/209, 23.0%) or three (45/209, 21.5%) nodules. The median number of reference standard nodules per scan was one, with a range of 0 to 18 nodules.
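The percentages above use the 209 scans with nodules as the denominator, whereas the reported range starts at 0, which suggests that the median is taken over all 290 scans. The following minimal sketch checks that reading from the counts given in the text. The helper `value_at` is a hypothetical name introduced for illustration, and the number of scans with four or more nodules is derived by subtraction rather than stated in the text.

```python
# Scans per nodule count, taken from the text: 81 scans with no nodules,
# then 74, 48 and 45 scans with one, two and three nodules.
TOTAL_SCANS = 290
counts = {0: 81, 1: 74, 2: 48, 3: 45}

# Derived, not reported: scans with four or more nodules, kept as one lumped bin.
counts_4_plus = TOTAL_SCANS - sum(counts.values())


def value_at(position, freq):
    """Return the nodule count of the scan at a 1-based rank in sorted order."""
    cumulative = 0
    for nodules in sorted(freq):
        cumulative += freq[nodules]
        if position <= cumulative:
            return nodules
    raise ValueError("position falls in the lumped 'four or more' bin")


# With an even number of scans, the median is the mean of the two middle ranks.
lower = value_at(TOTAL_SCANS // 2, counts)      # 145th scan
upper = value_at(TOTAL_SCANS // 2 + 1, counts)  # 146th scan
median = (lower + upper) / 2

print(counts_4_plus, median)
```

Because 81 + 74 = 155 scans have at most one nodule, both middle ranks fall in the one-nodule bin, which agrees with the reported median of one.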

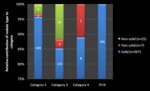

Of the 599 reference standard nodules, 567 (94.7%) were solid. By category, 260/599 (43.4%) nodules were Category 2, 135/599 (22.5%) were Category 3, and 9/599 (1.5%) were Category 4. A further 195 nodules were classified as IPLNs (i.e. 34.4% of solid nodules, and 32.6% of the total number of reference standard nodules) (Figure 2).
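As a quick arithmetic check, the reported percentages can be recomputed from the nodule counts. This is a minimal sketch using only the counts quoted in the text; the helper `pct` and the dictionary layout are illustrative choices. The four groups sum to 599, which suggests that they partition the reference standard, although that reading is an inference from the numbers rather than something the text states.

```python
TOTAL = 599   # reference standard nodules
SOLID = 567   # solid nodules

# Counts per group as quoted in the text.
by_class = {"Category 2": 260, "Category 3": 135, "Category 4": 9, "IPLN": 195}


def pct(part, whole):
    """Percentage of `whole` made up by `part`, rounded to one decimal place."""
    return round(100 * part / whole, 1)


# Share of each group among all reference standard nodules.
shares = {name: pct(n, TOTAL) for name, n in by_class.items()}

solid_share = pct(SOLID, TOTAL)
ipln_of_solid = pct(by_class["IPLN"], SOLID)
groups_total = sum(by_class.values())

print(shares, solid_share, ipln_of_solid, groups_total)
```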

Overall performance of radiographers and radiologists

Radiographers 1, 2, 3 and 4 had sensitivities of 67.6%, 77.8%, 79.4% and 61.6%, respectively (mean sensitivity 71.6 ± 8.5%). Radiologists A, B and C had sensitivities of 88.9%, 87.0% and 74.0%, respectively (mean sensitivity 83.3 ± 8.1%).
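The text does not say which form of standard deviation accompanies the mean sensitivities. The sketch below, which uses only the per-reader sensitivities quoted above, indicates that the sample standard deviation (divisor n − 1) reproduces the reported values, while the population form (divisor n) does not. The function names `summarize` and `summarize_population` are hypothetical.

```python
from statistics import mean, pstdev, stdev

# Per-reader sensitivities (%) as quoted in the text.
radiographers = [67.6, 77.8, 79.4, 61.6]
radiologists = [88.9, 87.0, 74.0]


def summarize(values):
    """Mean and sample standard deviation (divisor n - 1), to one decimal place."""
    return round(mean(values), 1), round(stdev(values), 1)


def summarize_population(values):
    """Mean and population standard deviation (divisor n), for comparison."""
    return round(mean(values), 1), round(pstdev(values), 1)


print(summarize(radiographers), summarize(radiologists))
print(summarize_population(radiographers), summarize_population(radiologists))
```

With only three or four readers per group, the two forms differ by roughly one percentage point, so the choice matters when comparing these summaries with other studies.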

The average FP rates per case for radiographers 1, 2, 3 and 4 were 1.2 ± 2.1, 2.9 ± 2.8, 0.6 ± 1.0 and 1.1 ± 1.3, respectively, while those of radiologists A, B and C were 0.5 ± 0.8, 0.7 ± 1.0 and 0.2 ± 0.5, respectively.

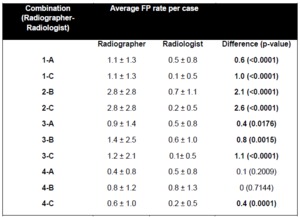

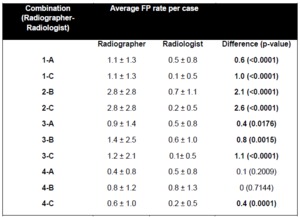

Comparison of radiographer and radiologist performance

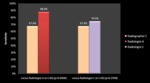

The sensitivities of each radiographer and of the corresponding radiologists in each radiographer-radiologist combination are illustrated in Figures 3-6. Radiographers 1 and 2 could only be compared with their corresponding local site radiologists (i.e. radiologists A and B, respectively) and the central site radiologist (radiologist C). Radiographer sensitivity was significantly lower than radiologist sensitivity in 7 of 10 radiographer-radiologist combinations (range of difference, 9.7%-32.8%; p<0.05), and not significantly different in the remaining 3 combinations.

The average FP rates per case for each reader in each radiographer-radiologist combination are given in Table 2. Radiographers had significantly higher FP rates than radiologists in 8 of 10 combinations (range of difference, 0.4-2.6; p<0.05), and there was no significant difference in the remaining 2 combinations.

Table 2: Average false-positive detection (FP) rates per case for radiographers and radiologists in each radiographer-radiologist combination.

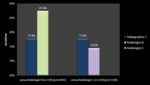

Reader performance in P1 compared to P2

The two radiographers with the lowest overall sensitivity (Radiographers 1 and 4) showed a significant improvement in sensitivity between P1 and P2 (50.0% in P1 versus 74.1% in P2 for Radiographer 1, and 41.8% in P1 versus 67.2% in P2 for Radiographer 4; p<0.005), but their sensitivity in P2 still did not reach the level of Radiographers 2 and 3, who showed no significant difference in sensitivity between the two periods. Radiologists’ sensitivity did not differ significantly between the two periods.

No radiographer or radiologist demonstrated a significant difference in average FP rate per case between the two periods. As such, the improved sensitivity of Radiographers 1 and 4 in P2 did not come at the expense of an increased average FP rate.