Aims and objectives

Nasopharyngeal cancer (NPC) is particularly prevalent in Southeast Asia.

Correct staging and grading of the tumor are of pivotal importance and guide treatment.

These tasks, which rely strongly on modern imaging techniques such as MRI, CT, and PET, are challenging and time-consuming.

Due to the massive amount of complex data produced, manually reading and incorporating all of the available information in an accurate, timely fashion is difficult, but essential for preventing unnecessary mistakes and rapidly beginning treatment.

Initial results using Deep Learning based approaches...

Methods and materials

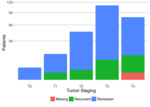

For the study we evaluated a group of 151 NPC patients.

For each patient, initial imaging including standard and diffusion-weighted (DWI) MRI scans was acquired (>100,000 images in total), manually examined by a board-certified radiologist, and staged using the standard TNM protocol.

The staging information was then used to decide on one of 4 different treatment plans or stages (II, III, IVabc).

For training, the data were divided into a training group and a validation group.

The training group was augmented using standard image augmentation approaches...
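The split and augmentation steps can be illustrated with a minimal sketch. It does not reproduce the pipeline used in the study: the validation fraction, the random seed, and the choice of flips and 90-degree rotations are all assumptions made for illustration, and the function names `split_by_patient` and `augment` are hypothetical. The one design point it is meant to show is that splitting by patient rather than by image keeps slices from the same patient out of both groups.

```python
import numpy as np


def split_by_patient(patient_ids, val_fraction=0.2, seed=0):
    """Split unique patient IDs into disjoint training and validation sets.

    val_fraction and seed are illustrative assumptions, not study values.
    """
    rng = np.random.default_rng(seed)
    shuffled = rng.permutation(np.unique(patient_ids))
    n_val = max(1, int(round(val_fraction * len(shuffled))))
    val_ids = set(shuffled[:n_val].tolist())
    train_ids = set(shuffled[n_val:].tolist())
    return train_ids, val_ids


def augment(image, rng):
    """Return a randomly flipped and 90-degree-rotated copy of a 2D slice.

    These transforms are common examples only; the study's actual
    augmentations are not specified in the text.
    """
    if rng.random() < 0.5:
        image = np.fliplr(image)
    k = int(rng.integers(0, 4))  # number of 90-degree rotations
    return np.rot90(image, k).copy()


# Usage: 151 hypothetical patient IDs, one augmented copy of a dummy slice.
train_ids, val_ids = split_by_patient(range(151))
rng = np.random.default_rng(0)
augmented = augment(np.arange(16.0).reshape(4, 4), rng)
```

Augmentation is applied to the training group only, so the validation scores reflect performance on unmodified images.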

Results

The model segmented tumor tissue very reliably, with scores of over 95% in the training group and 89% in the validation group, as measured by the Sørensen-Dice coefficient.
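For readers unfamiliar with the metric, the Sørensen-Dice coefficient of a predicted mask A and a reference mask B is 2|A ∩ B| / (|A| + |B|). The following is a minimal sketch of that computation on binary masks; the function name `dice_coefficient` and the convention of returning 1.0 when both masks are empty are assumptions, not details taken from the study.

```python
import numpy as np


def dice_coefficient(pred_mask, true_mask):
    """Sørensen-Dice overlap between two binary masks of equal shape."""
    pred = np.asarray(pred_mask, dtype=bool)
    true = np.asarray(true_mask, dtype=bool)
    intersection = np.logical_and(pred, true).sum()
    total = pred.sum() + true.sum()
    if total == 0:
        # Assumed convention: two empty masks count as perfect agreement.
        return 1.0
    return float(2.0 * intersection / total)


# Usage: two predicted tumor pixels, one of which matches the reference.
pred = [[1, 1], [0, 0]]
true = [[1, 0], [0, 0]]
score = dice_coefficient(pred, true)  # 2 * 1 / (2 + 1) = 0.666...
```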

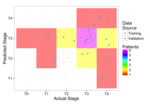

As a second step, stagings were derived.

These stagings were correct for 50% of the patients, and over 90% of the validation patients were within one stage of the expert-specified result.
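The two agreement figures (exact match and within one stage) can be computed as in the sketch below. It assumes that stages are encoded as consecutive integers in order of severity; that encoding, the function name `staging_agreement`, and the example values are hypothetical and are not taken from the study data.

```python
import numpy as np


def staging_agreement(predicted, expert):
    """Return (exact-match rate, within-one-stage rate).

    Both inputs are sequences of ordinal stage indices, e.g. 0, 1, 2, 3
    (an assumed encoding for illustration).
    """
    predicted = np.asarray(predicted)
    expert = np.asarray(expert)
    diff = np.abs(predicted - expert)
    exact = float(np.mean(diff == 0))
    within_one = float(np.mean(diff <= 1))
    return exact, within_one


# Usage with made-up stage indices for four patients.
exact, within_one = staging_agreement([0, 1, 2, 3], [0, 2, 2, 1])
# exact == 0.5, within_one == 0.75
```

Reporting the within-one-stage rate alongside the exact rate treats staging as an ordinal problem, where a near miss is less severe than a prediction several stages away.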

Conclusion

While accuracy can be improved, the ability to analyze medical images fully automatically, rapidly, and at low cost can lead to more efficient extraction of meaningful data from new MRI sequences in physician support systems.

Our approach shows the validity of the method for both segmentation and classification tasks.

We plan on extending this study to larger groups of patients and more diverse sets of images.

Additionally, we aim to improve the interpretability and transparency of such approaches to make understanding the results of complex machine...
