Acoustic noise levels were recorded in the isocenter of a 1.5 T Philips Ingenia MRI scanner (Best, the Netherlands).

The acoustic noise levels for eight widely used brain MR sequences were measured during their whole acquisition time, with and without acoustic noise reduction.

The MR protocol included a survey scan, prescans (coil survey scan, reference scan for parallel imaging), T1-weighted spin echo (T1w SE), T2-weighted turbo spin echo (T2w TSE), fluid-attenuated inversion recovery (FLAIR), time-of-flight angiogram (TOF) and a spin-echo echo-planar diffusion-weighted (SE-EPI DWI) sequence.

Two methods (Softone, Comfortone) provided by the MR vendor were used to lower acoustic noise levels.

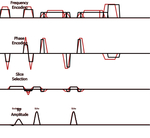

These techniques reduce the slew rate of the imaging gradients (slice-, phase- and frequency-encoding), that is, they lengthen the gradient rise and fall times (Figure 1).

Both methods work in essentially the same way, but differ in their implementation and usability.

Softone allows the user to choose a reduction level between one and five.

Comfortone automatically reduces acoustic noise levels by iteration to less than 98 dB SPL.

This value, predicted by the scanner software, represents a worst-case sound pressure level (SPL) anywhere in the scanner bore.

Table 1 provides an overview of the technique and reduction factor (Softone) applied for the different sequences.

Changes in relevant MR sequence parameters (acquisition time, repetition time (TR), echo time (TE), receiver bandwidth, relative signal level) were derived from the user interface of the MR console.

Images with and without acoustic noise reduction were acquired from one volunteer for illustration purposes.

| Sequence | Softone | Comfortone |
| --- | --- | --- |
| Coil survey scan | | x |
| Reference scan | | x |
| Survey | factor 5 | |
| T1w SE | factor 3 | |
| T2w TSE | | x |
| FLAIR | | x |
| TOF | factor 3 | |
| SE-EPI DWI | factor 5 | |

Table 1: Overview of the applied acoustic noise reduction technique for each sequence.

An optical microphone (Sennheiser MO2000, Germany) was placed on the left side of a phantom bottle in the head coil.

The microphone was connected to an analogue-to-digital converter and a laptop running data acquisition software (PSV 9, Polytec, Germany).

The waveform of the microphone signal was digitized with 16-bit amplitude resolution and sampled at 48 kHz.

The recorded waveform was post-processed offline with a custom Matlab script.

A block diagram of the post-processing flow is shown in Figure 2.

The process consists of 3 main stages: 1) data organization; 2) filtration and analysis; 3) data visualization.

In the first stage, the original waveform of each recording (one for each procedure of a protocol) is split into equal-sized time blocks (typically 1 second in duration).
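The first stage can be sketched as follows. This is a minimal Python illustration, not the authors' Matlab script; the function name `split_into_blocks` is hypothetical, and dropping the trailing samples that do not fill a whole block is an assumption, since the text does not say how the remainder is handled.

```python
import numpy as np

def split_into_blocks(waveform, fs=48_000, block_seconds=1.0):
    """Split a 1-D waveform into equal-sized, non-overlapping time blocks.

    Assumption: trailing samples that do not fill a complete block are dropped.
    Returns an array of shape (n_blocks, samples_per_block).
    """
    samples = np.asarray(waveform, dtype=float)
    block_len = int(round(fs * block_seconds))
    n_blocks = len(samples) // block_len
    return samples[: n_blocks * block_len].reshape(n_blocks, block_len)
```

For a recording of 3 s plus 100 extra samples at 48 kHz, this yields three blocks of 48000 samples each.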

In the second stage, each time block, as well as the complete waveform, is prefiltered with a 10 Hz high-pass filter (Butterworth profile, 4th order) to reduce the effects of DC-like artifacts.
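A filter with these characteristics could be set up as in the sketch below, here using SciPy rather than Matlab. The function name `highpass_10hz` is hypothetical. Whether the original script filtered causally or with zero phase is not stated; the sketch assumes a single causal pass and uses second-order sections, which tend to be numerically more robust when the cutoff is very low relative to the sampling rate.

```python
import numpy as np
from scipy import signal

def highpass_10hz(x, fs=48_000, cutoff_hz=10.0, order=4):
    """Apply a Butterworth high-pass filter (default: 4th order, 10 Hz).

    Assumption: one causal pass (sosfilt); a zero-phase variant would
    use signal.sosfiltfilt instead.
    """
    sos = signal.butter(order, cutoff_hz, btype="highpass", fs=fs, output="sos")
    return signal.sosfilt(sos, np.asarray(x, dtype=float))
```

Applied to a 1 kHz tone with a constant offset, the offset is removed after the initial filter transient while the tone passes essentially unchanged.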

Then, multiple weighting filters (A, C and ITU-R 468) are applied individually (not cumulatively) to both the individual blocks and the complete waveform.
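To make the weighting step concrete, the sketch below evaluates the standard analytic magnitude responses of the A and C weightings as gains in dB versus frequency. How the weightings were implemented in the original script (time-domain filters or spectral gains) is not stated, so this is only an illustration; the function names are hypothetical, and the ITU-R 468 curve is omitted here.

```python
import numpy as np

def a_weighting_db(f):
    """A-weighting gain in dB at frequency f (Hz), analytic form; f must be > 0."""
    f2 = np.asarray(f, dtype=float) ** 2
    ra = (12194.0**2 * f2**2) / (
        (f2 + 20.6**2)
        * np.sqrt((f2 + 107.7**2) * (f2 + 737.9**2))
        * (f2 + 12194.0**2)
    )
    return 20.0 * np.log10(ra) + 2.00  # offset normalizes to ~0 dB at 1 kHz

def c_weighting_db(f):
    """C-weighting gain in dB at frequency f (Hz), analytic form; f must be > 0."""
    f2 = np.asarray(f, dtype=float) ** 2
    rc = (12194.0**2 * f2) / ((f2 + 20.6**2) * (f2 + 12194.0**2))
    return 20.0 * np.log10(rc) + 0.06  # offset normalizes to ~0 dB at 1 kHz
```

Both curves are close to 0 dB at 1 kHz, while the A-weighting attenuates low frequencies far more strongly than the C-weighting (roughly 19 dB at 100 Hz).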

After that, the peak and root mean square (RMS) of the time waveform are computed, and the magnitude of the frequency spectrum of each block or full recording (for each weighting filter) is obtained through a Fast Fourier Transform (FFT) algorithm.
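These quantities can be computed as in the following sketch. It assumes the waveform has already been calibrated to sound pressure in pascals (the calibration procedure is not described here) and uses the conventional 20 µPa reference for dB SPL; the names `block_metrics` and `level_db_spl` are hypothetical.

```python
import numpy as np

P_REF = 20e-6  # reference sound pressure in Pa (20 µPa), standard for dB SPL

def level_db_spl(pressure_pa):
    """Convert a sound pressure in Pa (RMS or peak) to a level in dB SPL."""
    return 20.0 * np.log10(pressure_pa / P_REF)

def block_metrics(block_pa, fs=48_000):
    """Peak level, RMS level and FFT magnitude spectrum of one block.

    Assumption: block_pa is calibrated in pascals.
    """
    block_pa = np.asarray(block_pa, dtype=float)
    peak = np.max(np.abs(block_pa))
    rms = np.sqrt(np.mean(block_pa**2))
    # One-sided magnitude spectrum, scaled by the block length.
    magnitude = np.abs(np.fft.rfft(block_pa)) / len(block_pa)
    freqs = np.fft.rfftfreq(len(block_pa), d=1.0 / fs)
    return {
        "peak_db_spl": level_db_spl(peak),
        "rms_db_spl": level_db_spl(rms),
        "freqs_hz": freqs,
        "magnitude": magnitude,
    }
```

As a sanity check, a 1 kHz sine with an RMS pressure of 1 Pa gives an RMS level of about 94 dB SPL and a peak level about 3 dB higher.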

Finally, several analysis results are reported and visualized as:

1) Average and maximum sound level (expressed as RMS and peak dB SPL, L(A)) for the complete waveform, which gives an approximate representative value of the average sound level over the whole recording (Figure 3).

2) Level versus frequency and time, expressed as an audio spectrogram (FFT magnitude spectrum for each time block), showing the frequency content of the recording and its temporal evolution (Figure 4a).

3) Frequency spectrum magnitude averaged across all blocks, which emphasizes the frequency content of the whole recording, based on repeating patterns within a time window of one block, while de-emphasizing the contribution of non-repeating events (Figure 4b).
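The spectrogram and the block-averaged spectrum can be illustrated with the short sketch below. It assumes non-overlapping, unwindowed blocks and a linear average of the magnitudes (not of dB values); neither detail is specified in the text, and the function names are hypothetical.

```python
import numpy as np

def block_spectra(blocks, fs=48_000):
    """FFT magnitude spectrum of every block: rows are time, columns are frequency.

    blocks: array of shape (n_blocks, samples_per_block).
    Returns (freqs_hz, magnitudes); the magnitudes form the spectrogram.
    """
    blocks = np.asarray(blocks, dtype=float)
    magnitudes = np.abs(np.fft.rfft(blocks, axis=1)) / blocks.shape[1]
    freqs = np.fft.rfftfreq(blocks.shape[1], d=1.0 / fs)
    return freqs, magnitudes

def averaged_spectrum(magnitudes):
    """Average the magnitude spectra across blocks (assumed linear average)."""
    return np.asarray(magnitudes).mean(axis=0)
```

A tone present in every block keeps its full magnitude in the average, whereas an event that occurs in only one of N blocks contributes only 1/N of its magnitude, which is the de-emphasis of non-repeating events described in item 3.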