Keywords:

Artificial Intelligence, Breast, Mammography, Computer Applications-General, Cancer

Authors:

J. L. Liu1, R. L. Mimish2, Z. Kellow3, M. Thériault3, J. W. Luo1, S. Bhatnagar3, B. Gallix1, C. Reinhold1, J. J. R. Chong1; 1Montreal, QC/CA, 2Jeddah/SA, 3Montreal/CA

DOI:

10.26044/ecr2019/C-3512

Results

Network Performance:

The CNN yielded an accuracy of 85.0%, with test AUCs for the individual density categories ranging from 0.935 to 0.998 (Fig. 2).

On the held-out set for this study, the final trained network achieved 90.8% accuracy when evaluated against the original clinical report label.
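As an illustration of how overall accuracy and per-category AUCs of this kind can be computed, the following is a minimal sketch and not the authors' evaluation code. It assumes four density categories encoded 0 to 3 and a table of per-class probabilities from the network; the function names and the toy data are hypothetical. Each AUC is computed one-vs-rest as the probability that a case from the category outscores a case from any other category, with ties counted as half.

```python
from typing import List, Sequence


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Fraction of cases where the predicted category equals the reference."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def binary_auc(is_positive: Sequence[bool], scores: Sequence[float]) -> float:
    """AUC as P(positive score > negative score), ties counted as 0.5."""
    pos = [s for s, flag in zip(scores, is_positive) if flag]
    neg = [s for s, flag in zip(scores, is_positive) if not flag]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def one_vs_rest_aucs(
    y_true: Sequence[int], probs: Sequence[Sequence[float]], n_classes: int
) -> List[float]:
    """One AUC per category, treating that category as the positive class."""
    return [
        binary_auc([t == k for t in y_true], [row[k] for row in probs])
        for k in range(n_classes)
    ]


# Toy data (hypothetical): reference labels and per-class network outputs.
y_true = [0, 1, 2, 3, 1, 2]
probs = [
    [0.7, 0.2, 0.1, 0.0],
    [0.2, 0.6, 0.2, 0.0],
    [0.0, 0.3, 0.6, 0.1],
    [0.0, 0.1, 0.3, 0.6],
    [0.1, 0.4, 0.5, 0.0],
    [0.0, 0.2, 0.5, 0.3],
]
# Predicted category is the index of the highest probability in each row.
y_pred = [max(range(len(row)), key=row.__getitem__) for row in probs]

acc = accuracy(y_true, y_pred)
aucs = one_vs_rest_aucs(y_true, probs, n_classes=4)
```

The pairwise formulation is quadratic in the number of cases, which is fine for a sketch; a rank-based implementation would be preferable for large test sets.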

Inter-Rater Human Reviewer Agreement:

Agreement between each individual human reviewer and the average consensus was excellent overall, with Cohen’s kappa coefficients ranging from 0.91 to 0.94 (Fig. 3).

While some of this agreement is attributable to the consensus being derived from the individual ratings themselves, no major discrepancies or outliers were noted among the reviewers.

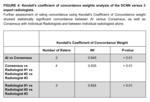

AI vs Human Reviewer Agreement:

Inter-rater reliability (IRR) analysis with a linearly weighted Cohen’s kappa demonstrated very good agreement (κ=0.87) between the CNN and the mammographers’ consensus, although this was lower than the agreement of the lowest-scoring radiologist (Fig. 3: Radiologist 3; κ=0.91).
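For readers unfamiliar with the statistic, a linearly weighted Cohen’s kappa penalises each disagreement in proportion to how many ordinal categories apart the two ratings are. The sketch below is a minimal standard-library implementation under the assumption that categories are encoded as integers from 0 to k-1; the function name and the toy ratings are hypothetical and are not the study data.

```python
from typing import Sequence


def linear_weighted_kappa(
    rater_a: Sequence[int], rater_b: Sequence[int], n_categories: int
) -> float:
    """Cohen's kappa with disagreement weights |i - j| / (k - 1)."""
    n = len(rater_a)
    if n == 0 or n != len(rater_b):
        raise ValueError("both raters must rate the same non-empty set of cases")
    k = n_categories

    # Contingency table: observed[i][j] counts cases rated i by A and j by B.
    observed = [[0] * k for _ in range(k)]
    for a, b in zip(rater_a, rater_b):
        observed[a][b] += 1

    row_totals = [sum(row) for row in observed]
    col_totals = [sum(observed[i][j] for i in range(k)) for j in range(k)]

    weighted_observed = 0.0
    weighted_expected = 0.0
    for i in range(k):
        for j in range(k):
            weight = abs(i - j) / (k - 1)
            weighted_observed += weight * observed[i][j]
            # Expected count under independence of the two raters.
            weighted_expected += weight * row_totals[i] * col_totals[j] / n

    if weighted_expected == 0:
        raise ValueError("kappa is undefined when all ratings fall in one category")
    return 1.0 - weighted_observed / weighted_expected


# Toy ratings (hypothetical): network output versus a consensus reading.
cnn = [0, 1, 1, 2, 2, 3, 3, 1]
consensus = [0, 1, 2, 2, 2, 3, 2, 1]
kappa = linear_weighted_kappa(cnn, consensus, n_categories=4)
```

With linear weights, a one-category disagreement costs a third as much as a disagreement between the two extreme categories when there are four categories, which suits an ordinal density scale better than unweighted kappa.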

Kendall’s coefficient of concordance (W) was 0.945 between the CNN and the consensus readings (Fig. 4).
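Kendall’s W summarises how consistently a set of raters rank the same cases, from 0 (no concordance) to 1 (identical rankings). The sketch below is a minimal standard-library implementation and an assumption about how such a value can be obtained, not the authors' code. Because ordinal density ratings contain many ties, it uses average ranks and the usual tie correction; the function names and toy ratings are hypothetical.

```python
from typing import List, Sequence, Tuple


def average_ranks(values: Sequence[float]) -> Tuple[List[float], int]:
    """Return 1-based average ranks and the tie term sum(t**3 - t)."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    tie_term = 0
    start = 0
    while start < len(order):
        # Extend the run while the next value equals the current one.
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared_rank = (start + end) / 2 + 1
        for pos in range(start, end + 1):
            ranks[order[pos]] = shared_rank
        run_length = end - start + 1
        tie_term += run_length ** 3 - run_length
        start = end + 1
    return ranks, tie_term


def kendalls_w(ratings: Sequence[Sequence[float]]) -> float:
    """Tie-corrected Kendall's W; ratings[r][i] is rater r's score for case i."""
    m = len(ratings)
    n = len(ratings[0])
    if m < 2 or any(len(r) != n for r in ratings):
        raise ValueError("need at least two raters scoring the same cases")

    ranked = [average_ranks(r) for r in ratings]
    rank_totals = [sum(ranks[i] for ranks, _ in ranked) for i in range(n)]
    mean_total = m * (n + 1) / 2
    s = sum((total - mean_total) ** 2 for total in rank_totals)

    ties = sum(tie_term for _, tie_term in ranked)
    denominator = m ** 2 * (n ** 3 - n) - m * ties
    if denominator == 0:
        raise ValueError("W is undefined when every rater gives a single value")
    return 12 * s / denominator


# Toy ratings (hypothetical): network output versus a consensus reading.
cnn = [0, 1, 1, 2, 3]
consensus = [0, 1, 2, 2, 3]
w = kendalls_w([cnn, consensus])
```

For exactly two raters, W is a rescaling of the Spearman rank correlation (ρ = 2W − 1), so a high W between the network and the consensus corresponds to a strong positive rank correlation.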