Keywords:

Multicentre study, Observational, Not applicable, Quality assurance, Comparative studies, Digital radiography, Thorax, Professional issues, Artificial Intelligence, Chest

Authors:

M. Englmaier1, D. Sasse1, D. Pfeiffer1, M. Kotnik2, L. Lin2, H. J. Lamb2, J. Conradsen3, J. Fløtten3, N. Wieberneit4; 1Munich/DE, 2Leiden/NL, 3Herning/DK, 4Hamburg/DE

DOI:

10.26044/ecr2020/C-05601

Results

The readers' assessments across all hospitals were generally in fair to moderate agreement (Fleiss’ kappa 0.32 to 0.5), as shown in Table 1. The agreement between the two readers from each hospital was of a similar magnitude, with some exceptions (for example, 0.08 for rotation and 0.15 for inhalation at Hospital 1).

Table 1: Kappa values across hospitals (overall) and between readers at the respective hospital

| Image quality aspect | Overall | Hospital 1 | Hospital 2 | Hospital 3 |
|---|---|---|---|---|
| FOV North | 0.46 | 0.57 | 0.42 | 0.21 |
| FOV East | 0.5 | 0.48 | 0.27 | 0.49 |
| FOV West | 0.43 | 0.36 | 0.17 | 0.47 |
| FOV South | 0.49 | 0.57 | 0.51 | 0.46 |
| Rotation | 0.32 | 0.08 | 0.42 | 0.37 |
| Inhalation | 0.43 | 0.15 | 0.34 | 0.6 |

Interpretation: 0.01–0.20 slight agreement, 0.21–0.40 fair agreement, 0.41–0.60 moderate agreement, 0.61–0.80 substantial agreement, 0.81–1.00 almost perfect agreement.
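The interpretation bands above can be applied mechanically to the tabulated values. The following is a minimal sketch, not part of the study's analysis; the function name `interpret_kappa` and the label used for values at or below zero are assumptions made for illustration.

```python
# Upper bound of each band, copied from the interpretation note under Table 1.
BANDS = [
    (0.20, "slight"),
    (0.40, "fair"),
    (0.60, "moderate"),
    (0.80, "substantial"),
    (1.00, "almost perfect"),
]


def interpret_kappa(kappa: float) -> str:
    """Map a kappa value to the agreement label used under Table 1."""
    if kappa <= 0:
        # Assumption: the note does not cover values at or below zero.
        return "no agreement beyond chance"
    for upper, label in BANDS:
        if kappa <= upper:
            return label
    raise ValueError("kappa cannot exceed 1")


# Overall kappa values taken from Table 1.
overall = {
    "FOV North": 0.46,
    "FOV East": 0.5,
    "FOV West": 0.43,
    "FOV South": 0.49,
    "Rotation": 0.32,
    "Inhalation": 0.43,
}

for aspect, kappa in overall.items():
    print(f"{aspect}: {kappa:.2f} ({interpret_kappa(kappa)} agreement)")
```

With these bands, rotation is the only aspect whose overall kappa falls in the fair range; all other overall values fall in the moderate range.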

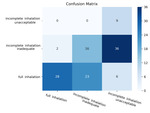

The least agreement in the assessment was observed for patient rotation, with a kappa value of 0.32 across sites and values as low as 0.08 within hospitals. This can partly be attributed to the fact that readers not only assessed whether the clavicles were symmetric to the center line of the spine, but also rated the severity of the asymmetry as inadequate or unacceptable. Here, the rating varied considerably, as shown in Fig. 5. When the data were evaluated only with respect to rating as symmetrical or asymmetrical, the agreement was significantly higher, reaching substantial agreement in some instances (kappa 0.24-0.73). Similar observations were made for inhalation, as illustrated in Fig. 6. Kappa values increased to 0.44-0.69 when only complete versus incomplete inhalation was analyzed, without differentiating between inadequate and unacceptable.
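The effect of merging the two severity grades can be illustrated with a small Fleiss' kappa calculation. This is a sketch under stated assumptions: the ratings below are invented for illustration and are not study data, the labels `S` (symmetric), `I` (inadequate) and `U` (unacceptable) are hypothetical codes, and six readers per image is assumed from two readers at each of three hospitals.

```python
from collections import Counter


def fleiss_kappa(ratings):
    """Fleiss' kappa for a list of images, each a list of one label per reader."""
    n_subjects = len(ratings)
    n_raters = len(ratings[0])
    if n_raters < 2 or any(len(row) != n_raters for row in ratings):
        raise ValueError("every image needs the same number (>= 2) of ratings")

    totals = Counter()  # how often each category was used overall
    p_bar = 0.0         # mean observed agreement per image
    for row in ratings:
        counts = Counter(row)
        totals.update(counts)
        agreeing_pairs = sum(c * c for c in counts.values()) - n_raters
        p_bar += agreeing_pairs / (n_raters * (n_raters - 1))
    p_bar /= n_subjects

    # Agreement expected by chance from the overall category proportions.
    p_e = sum((t / (n_subjects * n_raters)) ** 2 for t in totals.values())
    if p_e == 1.0:
        return float("nan")  # only one category used; kappa is undefined
    return (p_bar - p_e) / (1.0 - p_e)


# Hypothetical ratings (NOT study data): six images, six readers each.
three_level = [
    ["S"] * 6,
    ["S"] * 6,
    ["I"] * 3 + ["U"] * 3,
    ["I"] * 3 + ["U"] * 3,
    ["I"] * 4 + ["U"] * 2,
    ["S"] * 5 + ["I"] * 1,
]

# Merge "inadequate" and "unacceptable" into a single "asymmetric" label A.
binary = [["S" if label == "S" else "A" for label in row] for row in three_level]

print(f"three-level kappa: {fleiss_kappa(three_level):.2f}")
print(f"binary kappa:      {fleiss_kappa(binary):.2f}")
```

In this invented example the readers mostly agree on whether an image is asymmetric but split on the severity grade, so the binary kappa comes out higher than the three-level kappa, which mirrors the pattern reported above.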

Significant differences were also seen in the assessment of the field of view, indicated by the distance between the lung fields and the image edge, especially for distances perceived as too wide. An example of the ratings is given in Fig. 7.

This would imply a strongly differing threshold between good and unacceptable image quality, if implemented in an automated image quality analysis tool [8]. As this parameter also impacts patient dose, it should be given careful consideration.