Background:

AI has enormous potential to enhance performance in clinical radiology and, ultimately, to improve patient outcomes in our healthcare system.[1] Many AI models have been validated for their diagnostic performance;[2] however, successful real-world implementation of an AI assist device in clinical practice is a complex, multifaceted task that requires further evaluation.

First and foremost, an AI device must positively impact radiologists’ performance and reporting experience without hindering their overall efficiency. Seamless integration into the existing radiology information system (RIS) and picture archiving and communication system (PACS) is extremely important, both for efficiency and for acceptance and adoption of the device by radiologists. Robust device accuracy across diverse clinical settings (e.g. patients from community radiology clinics through to tertiary-level hospitals) and deployability into various technical infrastructures are also important factors to consider.

For successful implementation, early collaboration between the AI device manufacturer and the radiology service provider is pivotal. After implementation, assessing user satisfaction becomes important for directing ongoing improvement. Pre-determined success metrics are therefore an important aspect of implementation planning.

It is well known that radiologists have variable perceptions of AI, and this can be one of the biggest challenges for successful device implementation. There is a high correlation between AI-specific education and positive attitudes towards its use in clinical practice;[3] hence, training and education are another key area for successful widespread adoption.

The AI assist device Annalise Enterprise CXR, which conveys model predictions for chest X-rays (CXRs) via a widget-style viewer (see Figure 1), has been implemented in the I-MED radiology network, which comprises over 250 hospitals and clinics throughout Australia. This AI device has been validated for its performance in detecting 124 findings on CXR.[4] In an initial controlled-release pilot study, the device was rolled out to 11 radiologists at I-MED for use as part of their daily reporting workflow, with radiologists providing per-case feedback about its impact on reporting. Network-wide implementation took place following the pilot study, and a further user-satisfaction survey was conducted with participating I-MED radiologists.

Methods:

The pilot study was performed over a period of 6 weeks in November to December 2020. During this period, radiologists performed their usual daily reporting caseload, with a mix of on-site and teleradiology reporting in line with their normal working environment. The case mix comprised CXRs from public and private hospitals as well as community-based clinics. The 11 participating radiologists’ level of experience varied, with the most senior having more than 10 years of experience, three having 6-10 years of experience and five having 5 years or less.

When the radiologists reported a CXR with the AI assist device available, per-case feedback was collected via a modified device user interface. This modified user interface allowed the radiologist to mark findings they initially missed that were detected by the device, reject device findings, and add findings that the device did not detect. It also allowed them to indicate whether the device led to significant report changes, patient management changes or further imaging recommendations (see Figure 2).

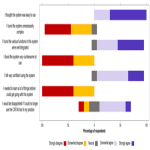

At the conclusion of the pilot study, an additional user-feedback survey was conducted. It asked whether the radiologists subjectively felt that device use led to increased accuracy, whether they thought the device was well integrated into their existing workflow and easy to use, and how it affected their reporting efficiency. It also asked about their overall attitude, after extended use, to the device and to the general implementation of AI-based tools in clinical practice.

A user-satisfaction survey was conducted in March 2021, two months after network-wide implementation. Feedback was collected from a larger sample of 63 radiologists and the results were analysed. The areas of focus in this survey were rate of continued use, overall impact on reporting, satisfaction with user training, whether technical difficulties were encountered, subjective effect on reporting times, and level of disappointment if device access were removed.